---
format:
  revealjs:
    code-line-numbers: false
    code-link: false
    code-copy: false
    # callout-appearance: simple
    # syntax-definitions:
    #   - ./docs/python.xml
    scrollable: true
    title-block-style: none
    slide-number: c
    title-slide-style: default
    chalkboard:
      buttons: false
    auto-animate: true
    reference-location: section
    touch: true
    pause: false
    footnotes-hover: true
    citations-hover: true
    preview-links: true
    controls-tutorial: true
    controls: false
    logo: "https://raw.githubusercontent.com/saforem2/llm-lunch-talk/main/docs/assets/anl.svg"
    history: false
    highlight-style: "atom-one"
    css:
      - css/default.css
      - css/callouts-html.css
    theme:
      - white
      - css/light.scss
      - css/common.scss
      - css/syntax-light.scss
    self-contained: false
    embed-resources: false
    self-contained-math: false
    center: true
    default-image-extension: svg
    code-overflow: scroll
    html-math-method: katex
    fig-align: center
    mermaid:
      theme: dark
  gfm:
    author: Sam Foreman
    output-file: "README.md"
---

# {.centeredslide background-image="https://github.com/saforem2/llm-lunch-talk/blob/main/docs/assets/image2.png?raw=true" loading="lazy"}

<!-- # {.centerdslide background-image="https://www.alcf.anl.gov/sites/default/files/2023-08/ALCF-HandsOnHPCWksp-LL.png?itok=6qi5GY6y" height="80%"} -->

<!-- # {.centeredslide background-image="./assets/massstar_science_highlights_2017_01.png" loading="lazy"} -->

<!-- # {.centeredslide background-image="./assets/p62_cover-edit_CMYK.jpg" loading="lazy"} -->

<!-- # {.centeredslide background-image="./assets/tribology_cover_test_image_06m.png" loading="lazy"} -->

<!-- # {.centeredslide background-image="./assets/6120702714_c9a4cf5d78_o.jpg" loading="lazy"} -->

<!-- # {.centeredslide background-image="./assets/ccm_s23-50_Ye_03B.png" loading="lazy"} -->

::: {style="background-color: rgba(8, 42, 123, 0.7); border-radius: 10px; text-align:left; padding: 1.5rem; margin-left: auto; margin-right: auto; line-height: 1.5em!important;"}

::: {style="display:flex;"}

[October 10 -- 12, 2023 $\hspace{5pt}$ {{< fa solid shapes >}}]{style="font-size: 1.75em; font-weight: 600; border-bottom: 1px solid white; color: #F8F8F8"} []{style="display:inline-block;"}

:::

[ALCF Hands-on]{style="font-size: 3.0em; font-weight: 700; color: white"}

<br>

[HPC Workshop]{style="font-size: 3.0em; font-weight: 700; color: #FFFFFF"}

:::

::: footer

::: {style="text-shadow: 2px 2px 3px rgba(0,0,0,0.8); color: #FFFFFF; text-align: left; margin-left: 11%; font-size: 0.9em;"}

<!-- [[{{< bi person-badge >}} Sam Foreman]{style="color:#757575;"}](https://samforeman.me) -->

[[{{< bi easel >}} LLMs on Polaris]{style="color: #757575"}](https://saforem2.github.io/llm-lunch-talk) [\@]{.dim-text} [[{{< bi building >}} ALCF Hands-on HPC Workshop]{style="color: #757575"}](https://github.com/argonne-lcf/ALCF_Hands_on_HPC_Workshop)

:::

:::

# [{{< fa regular id-badge >}}]{.dim-text} [Sam Foreman](https://samforeman.me) {style="font-size: 0.9em;"}

- I'm a Computational Scientist in the [Data Science Group](https://www.alcf.anl.gov/about/people/group/506) at [ALCF](https://alcf.anl.gov)[^1].

- Personal Website: [samforeman.me](https://samforeman.me)

- Background: [`{ML, LLMs, AI4Science, HEP, Lattice QCD, MCMC, Generative Modeling, ...}`]{}

[^1]: Mostly getting supercomputers to stop yelling at each other {{< fa solid network-wired >}}

Ongoing / recent work:

:::: {.columns}

::: {.column width="50%"}

- [AI + Science](https://github.com/saforem2/)

- [Building better sampling methods for Lattice QCD](https://github.com/saforem2/l2hmc-qcd)

- [GenSLMs: Genome-scale language models reveal SARS-CoV-2 evolutionary dynamics](https://www.biorxiv.org/content/10.1101/2022.10.10.511571v2)

- [Foundation models for long term climate forecasting](https://saforem2.github.io/climate-analysis)

:::

::: {.column width="50%"}

- [Scaling Large Language Models](https://github.com/saforem2/Megatron-DS-Benchmarking)

- [Optimizing distributed training across thousands of GPUs](https://github.com/argonne-lcf/mlprof)

- Building new parallelism techniques for efficient scaling

- Generative modeling (esp. for physical systems)

:::

::::

# Status of Large Language Models[^slides-gh]

::: {#fig-llms}

Large Language Models (LLMs) have taken the ~~NLP community~~ **world** by storm[^llm-animation]

:::

[^llm-animation]: [{{< fa brands github >}} `Hannibal046/Awesome-LLM`](https://github.com/Hannibal046/Awesome-LLM)

[^slides-gh]: [{{< fa brands github >}} `saforem2/llm-lunch-talk`](https://github.com/saforem2/llm-lunch-talk) [(slides)](https://saforem2.github.io/llm-lunch-talk)

# Emergent Abilities {background-color="#FBFBFD"}

::: {width="66%" style="text-align: center;"}

<img src="https://github.com/saforem2/llm-lunch-talk/blob/main/docs/assets/emergent-abilities.gif?raw=true" height="75%" />

[Emergent abilities of Large Language Models](https://arxiv.org/abs/2206.07682) @yao2023tree

:::

# Training LLMs

::: {layout="[ 50, 40 ]" layout-valign="center"}

::: {#fig-evolution}

Visualization from @yang2023harnessing

:::

::: {}

:::

:::

# Recent Work (2017 -- Now) {.scrollable style="max-height: 90%; height: 100%;"}

<details closed><summary><b>Recent Work</b></summary>

::: {.table-responsive width="100%" style="max-height: 550px!important; font-size: 0.7rem;" data-quarto-disable-processing="true"}

| Date | Keywords | Institute | Paper | Publication |
| :-----: | :------------------: | :--------------: | :---- | :---------: |
| 2017-06 | Transformers | Google | [Attention Is All You Need](https://arxiv.org/pdf/1706.03762.pdf) | NeurIPS |
| 2018-06 | GPT 1.0 | OpenAI | [Improving Language Understanding by Generative Pre-Training](https://www.cs.ubc.ca/~amuham01/LING530/papers/radford2018improving.pdf) | |
| 2018-10 | BERT | Google | [BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding](https://aclanthology.org/N19-1423.pdf) | NAACL |
| 2019-02 | GPT 2.0 | OpenAI | [Language Models are Unsupervised Multitask Learners](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf) | |
| 2019-09 | Megatron-LM | NVIDIA | [Megatron-LM: Training Multi-Billion Parameter Language Models Using Model Parallelism](https://arxiv.org/pdf/1909.08053.pdf) | |
| 2019-10 | T5 | Google | [Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer](https://jmlr.org/papers/v21/20-074.html) | JMLR |
| 2019-10 | ZeRO | Microsoft | [ZeRO: Memory Optimizations Toward Training Trillion Parameter Models](https://arxiv.org/pdf/1910.02054.pdf) | SC |
| 2020-01 | Scaling Law | OpenAI | [Scaling Laws for Neural Language Models](https://arxiv.org/pdf/2001.08361.pdf) | |
| 2020-05 | GPT 3.0 | OpenAI | [Language models are few-shot learners](https://papers.nips.cc/paper/2020/file/1457c0d6bfcb4967418bfb8ac142f64a-Paper.pdf) | NeurIPS |
| 2021-01 | Switch Transformers | Google | [Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity](https://arxiv.org/pdf/2101.03961.pdf) | JMLR |
| 2021-08 | Codex | OpenAI | [Evaluating Large Language Models Trained on Code](https://arxiv.org/pdf/2107.03374.pdf) | |
| 2021-08 | Foundation Models | Stanford | [On the Opportunities and Risks of Foundation Models](https://arxiv.org/pdf/2108.07258.pdf) | |
| 2021-09 | FLAN | Google | [Finetuned Language Models are Zero-Shot Learners](https://openreview.net/forum?id=gEZrGCozdqR) | ICLR |
| 2021-10 | T0 | HuggingFace et al. | [Multitask Prompted Training Enables Zero-Shot Task Generalization](https://arxiv.org/abs/2110.08207) | ICLR |
| 2021-12 | GLaM | Google | [GLaM: Efficient Scaling of Language Models with Mixture-of-Experts](https://arxiv.org/pdf/2112.06905.pdf) | ICML |
| 2021-12 | WebGPT | OpenAI | [WebGPT: Browser-assisted question-answering with human feedback](https://www.semanticscholar.org/paper/WebGPT%3A-Browser-assisted-question-answering-with-Nakano-Hilton/2f3efe44083af91cef562c1a3451eee2f8601d22) | |
| 2021-12 | Retro | DeepMind | [Improving language models by retrieving from trillions of tokens](https://www.deepmind.com/publications/improving-language-models-by-retrieving-from-trillions-of-tokens) | ICML |
| 2021-12 | Gopher | DeepMind | [Scaling Language Models: Methods, Analysis & Insights from Training Gopher](https://arxiv.org/pdf/2112.11446.pdf) | |
| 2022-01 | COT | Google | [Chain-of-Thought Prompting Elicits Reasoning in Large Language Models](https://arxiv.org/pdf/2201.11903.pdf) | NeurIPS |
| 2022-01 | LaMDA | Google | [LaMDA: Language Models for Dialog Applications](https://arxiv.org/pdf/2201.08239.pdf) | |
| 2022-01 | Minerva | Google | [Solving Quantitative Reasoning Problems with Language Models](https://arxiv.org/abs/2206.14858) | NeurIPS |
| 2022-01 | Megatron-Turing NLG | Microsoft&NVIDIA | [Using DeepSpeed and Megatron to Train Megatron-Turing NLG 530B, A Large-Scale Generative Language Model](https://arxiv.org/pdf/2201.11990.pdf) | |
| 2022-03 | InstructGPT | OpenAI | [Training language models to follow instructions with human feedback](https://arxiv.org/pdf/2203.02155.pdf) | |
| 2022-04 | PaLM | Google | [PaLM: Scaling Language Modeling with Pathways](https://arxiv.org/pdf/2204.02311.pdf) | |
| 2022-04 | Chinchilla | DeepMind | [An empirical analysis of compute-optimal large language model training](https://www.deepmind.com/publications/an-empirical-analysis-of-compute-optimal-large-language-model-training) | NeurIPS |
| 2022-05 | OPT | Meta | [OPT: Open Pre-trained Transformer Language Models](https://arxiv.org/pdf/2205.01068.pdf) | |
| 2022-05 | UL2 | Google | [Unifying Language Learning Paradigms](https://arxiv.org/abs/2205.05131v1) | |
| 2022-06 | Emergent Abilities | Google | [Emergent Abilities of Large Language Models](https://openreview.net/pdf?id=yzkSU5zdwD) | TMLR |
| 2022-06 | BIG-bench | Google | [Beyond the Imitation Game: Quantifying and extrapolating the capabilities of language models](https://github.com/google/BIG-bench) | |
| 2022-06 | METALM | Microsoft | [Language Models are General-Purpose Interfaces](https://arxiv.org/pdf/2206.06336.pdf) | |
| 2022-09 | Sparrow | DeepMind | [Improving alignment of dialogue agents via targeted human judgements](https://arxiv.org/pdf/2209.14375.pdf) | |
| 2022-10 | Flan-T5/PaLM | Google | [Scaling Instruction-Finetuned Language Models](https://arxiv.org/pdf/2210.11416.pdf) | |
| 2022-10 | GLM-130B | Tsinghua | [GLM-130B: An Open Bilingual Pre-trained Model](https://arxiv.org/pdf/2210.02414.pdf) | ICLR |
| 2022-11 | HELM | Stanford | [Holistic Evaluation of Language Models](https://arxiv.org/pdf/2211.09110.pdf) | |
| 2022-11 | BLOOM | BigScience | [BLOOM: A 176B-Parameter Open-Access Multilingual Language Model](https://arxiv.org/pdf/2211.05100.pdf) | |
| 2022-11 | Galactica | Meta | [Galactica: A Large Language Model for Science](https://arxiv.org/pdf/2211.09085.pdf) | |
| 2022-12 | OPT-IML | Meta | [OPT-IML: Scaling Language Model Instruction Meta Learning through the Lens of Generalization](https://arxiv.org/pdf/2212.12017) | |
| 2023-01 | Flan 2022 Collection | Google | [The Flan Collection: Designing Data and Methods for Effective Instruction Tuning](https://arxiv.org/pdf/2301.13688.pdf) | |
| 2023-02 | LLaMA | Meta | [LLaMA: Open and Efficient Foundation Language Models](https://research.facebook.com/publications/llama-open-and-efficient-foundation-language-models/) | |
| 2023-02 | Kosmos-1 | Microsoft | [Language Is Not All You Need: Aligning Perception with Language Models](https://arxiv.org/abs/2302.14045) | |
| 2023-03 | PaLM-E | Google | [PaLM-E: An Embodied Multimodal Language Model](https://palm-e.github.io) | |
| 2023-03 | GPT 4 | OpenAI | [GPT-4 Technical Report](https://openai.com/research/gpt-4) | |
| 2023-04 | Pythia | EleutherAI et al. | [Pythia: A Suite for Analyzing Large Language Models Across Training and Scaling](https://arxiv.org/abs/2304.01373) | ICML |
| 2023-05 | Dromedary | CMU et al. | [Principle-Driven Self-Alignment of Language Models from Scratch with Minimal Human Supervision](https://arxiv.org/abs/2305.03047) | |
| 2023-05 | PaLM 2 | Google | [PaLM 2 Technical Report](https://ai.google/static/documents/palm2techreport.pdf) | |
| 2023-05 | RWKV | Bo Peng | [RWKV: Reinventing RNNs for the Transformer Era](https://arxiv.org/abs/2305.13048) | |
| 2023-05 | DPO | Stanford | [Direct Preference Optimization: Your Language Model is Secretly a Reward Model](https://arxiv.org/pdf/2305.18290.pdf) | |
| 2023-07 | LLaMA 2 | Meta | [Llama 2: Open Foundation and Fine-Tuned Chat Models](https://arxiv.org/pdf/2307.09288.pdf) | |

: Papers, 2017--* {#tbl-papers .striped .hover}

:::

</details>

::: footer

1. [{{< fa brands github >}} Hannibal046/Awesome-LLM](https://github.com/Hannibal046/Awesome-LLM/blob/main/README.md) [[](https://awesome.re)]{.inline-image}

:::

# Life-Cycle of the LLM {auto-animate=true}

::: {layout="[ 45, 55 ]" layout-valign=center}

::: {#column-one}

1. Data collection + preprocessing
2. **Pre-training**
    - Architecture decisions:
      `{model_size, hyperparameters,`
      `parallelism, lr_schedule, ...}`
3. Supervised Fine-Tuning
    - Instruction Tuning
    - Alignment
4. Deploy (+ monitor, re-evaluate, etc.)

:::

::: {#column-two}

::: {#fig-pretrain-two}

**Pre-training**: Virtually all of the compute is used during the pre-training phase[^il-transf].

:::

:::

[^il-transf]: Figure from [The Illustrated Transformer](http://jalammar.github.io/illustrated-transformer/)

:::

# Life-Cycle of the LLM: Pre-training {auto-animate=true}

::: {#fig-pretrain-two}

**Pre-training**: Virtually all of the compute is used during the pre-training phase.

:::

# Life-Cycle of the LLM: Fine-Tuning {auto-animate=true style="font-size: 0.8em;"}

::: {#fig-pretrain-two}

**Fine-tuning**[^ill-transf1]: Fine-tuning actually updates the model's weights to make the model better at a certain task.

:::

[^ill-transf1]: Figure from [The Illustrated Transformer](http://jalammar.github.io/illustrated-transformer/)

# Transformer Architecture {.centeredslide height="100%" style="height:100%; font-size: 0.8em;"}

@vaswani2017attention

# Forward Pass

::: {#fig-forward-pass}

<video data-autoplay src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/assisted-generation/gif_1_1080p.mov"></video>

Language Model trained for causal language modeling. Video from: [🤗 Generation with LLMs](https://huggingface.co/docs/transformers/main/en/llm_tutorial)

:::

# Generating Text

::: {#fig-generating-text}

<video data-autoplay src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/assisted-generation/gif_2_1080p.mov"></video>

Language Model trained for causal language modeling. Video from: [🤗 Generation with LLMs](https://huggingface.co/docs/transformers/main/en/llm_tutorial)

:::
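The generation loop in the video can be sketched in a few lines of plain Python. This is a toy illustration, not the Hugging Face API: `BIGRAM_SCORES` and `next_token_scores` are hypothetical stand-ins for a model's forward pass, and decoding is greedy (the highest-scoring token is always chosen).

```python
# Toy sketch of autoregressive (causal) text generation with greedy decoding.
# `BIGRAM_SCORES` and `next_token_scores` are hypothetical stand-ins for a
# real model's forward pass; a real LLM conditions on the full prefix.

BIGRAM_SCORES = {
    "<bos>": {"the": 0.9, "cat": 0.1},
    "the": {"cat": 0.7, "mat": 0.3},
    "cat": {"sat": 0.8, "<eos>": 0.2},
    "sat": {"<eos>": 1.0},
    "mat": {"<eos>": 1.0},
}


def next_token_scores(tokens):
    """Return a score for each candidate next token given the prefix."""
    return BIGRAM_SCORES[tokens[-1]]


def generate(prompt, max_new_tokens=10):
    """Repeatedly run the 'forward pass' and append the best-scoring token."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        scores = next_token_scores(tokens)
        best = max(scores, key=scores.get)  # greedy choice
        if best == "<eos>":
            break
        tokens.append(best)
    return tokens


generated = generate(["<bos>"])
print(generated)
```

Each iteration feeds the tokens generated so far back in as input, which is why generation cost grows with the length of the output.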

# Parallelism Overview

> _**Modern parallelism techniques** enable the training of large language models_

# Parallelism Concepts[^hf-mp] {style="font-size: 0.9em;"}

- **DataParallel (DP)**:
    - The same setup is replicated multiple times, and each replica is fed a slice of the data.
    - The processing is done in parallel, and all replicas are synchronized at the end of each training step.
- **TensorParallel (TP)**:
    - Each tensor is split up into multiple chunks.
    - So, instead of having the whole tensor reside on a single GPU, each shard of the tensor resides on its designated GPU.
    - During processing, each shard gets processed separately and in parallel on different GPUs, and the results are synced at the end of the step.
    - This is what one may call horizontal parallelism, as the splitting happens at the horizontal level.
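As a rough illustration of the data-parallel synchronization step, the sketch below uses a one-parameter model `y = w * x` in plain Python. The function names are hypothetical, and averaging a list of local gradients stands in for the all-reduce that a real framework would perform across GPUs.

```python
# Minimal sketch of data parallelism for a 1-parameter model y = w * x.
# Each "worker" holds an identical copy of w and sees only its data shard;
# averaging the local gradients plays the role of the all-reduce step.


def grad_mse(w, xs, ys):
    """Gradient of mean((w*x - y)^2) with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def data_parallel_step(w, xs, ys, num_workers, lr=0.01):
    """One synchronized training step across `num_workers` replicas."""
    shard = len(xs) // num_workers  # assumes the batch divides evenly
    local_grads = [
        grad_mse(w, xs[i * shard:(i + 1) * shard], ys[i * shard:(i + 1) * shard])
        for i in range(num_workers)
    ]
    avg_grad = sum(local_grads) / num_workers  # "all-reduce" (mean)
    return w - lr * avg_grad  # every replica applies the same update


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
w_single = data_parallel_step(0.0, xs, ys, num_workers=1)
w_multi = data_parallel_step(0.0, xs, ys, num_workers=2)
print(w_single, w_multi)
```

With equally sized shards, the averaged gradient matches the full-batch gradient, so one worker and two workers arrive at the same updated weight.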

[^hf-mp]: [🤗 Model Parallelism](https://huggingface.co/docs/transformers/v4.15.0/parallelism)

# Parallelism Concepts[^hf-mp1] {style="font-size: 0.9em;"}

- **PipelineParallel (PP)**:
    - The model is split up vertically (layer-level) across multiple GPUs, so that only one or several layers of the model are placed on a single GPU.
    - Each GPU processes a different stage of the pipeline in parallel, working on a small chunk of the batch.
- **Zero Redundancy Optimizer (ZeRO)**:
    - Also performs sharding of the tensors, somewhat similar to TP, except the whole tensor gets reconstructed in time for a forward or backward computation, so the model doesn’t need to be modified.
    - It also supports various offloading techniques to compensate for limited GPU memory.
- **Sharded DDP**:
    - Another name for the foundational ZeRO concept as used by various other implementations of ZeRO.
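To make the pipeline idea concrete, here is a small sketch of a forward-only, fill-and-drain schedule. It is a simplification under stated assumptions: backward passes are ignored, every stage takes one time step per micro-batch, and `pipeline_schedule` is a hypothetical helper rather than part of any library.

```python
# Sketch of a forward-only pipeline schedule (GPipe-style fill and drain).
# Stage s can start micro-batch m only after stage s-1 has finished it,
# so at time step t, stage s works on micro-batch t - s (if it exists).


def pipeline_schedule(num_stages, num_microbatches):
    """Return, per time step, the micro-batch index each stage processes."""
    num_steps = num_stages + num_microbatches - 1
    schedule = []
    for t in range(num_steps):
        row = []
        for s in range(num_stages):
            m = t - s
            row.append(m if 0 <= m < num_microbatches else None)  # None = idle
        schedule.append(row)
    return schedule


schedule = pipeline_schedule(num_stages=2, num_microbatches=3)
for t, row in enumerate(schedule):
    print(t, row)
```

The `None` entries are the idle slots (the pipeline "bubble"); splitting the batch into more micro-batches makes them a smaller share of the total time steps.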

[^hf-mp1]: [🤗 Model Parallelism](https://huggingface.co/docs/transformers/v4.15.0/parallelism)

# Data Parallelism {style="font-size: 0.9em;"}

- **Data Parallelism**:
    - The simplest and most common parallelism technique. Workers maintain _identical copies_ of the _complete_ model and work on a _subset of the data_.
    - `DDP` is supported natively in PyTorch.
- ZeRO Data Parallel
    - ZeRO-powered data parallelism is shown below[^zero-dp]

::: {style="text-align: center;"}

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/parallelism-zero.png" width="75%" />

:::
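The memory savings in the figure can be approximated with a short calculation. The sketch below follows the accounting in the ZeRO paper for mixed-precision Adam (2 bytes per parameter for fp16 weights, 2 for fp16 gradients, and `K = 12` for optimizer state); the function name is hypothetical, and activations and temporary buffers are left out.

```python
# Per-GPU memory for model states under ZeRO, following the accounting in
# the ZeRO paper for mixed-precision Adam: 2 bytes/param (fp16 weights),
# 2 bytes/param (fp16 grads), and K = 12 bytes/param of optimizer state.
# Activations and temporary buffers are NOT included in this estimate.


def zero_model_state_bytes(num_params, dp_size, stage, k=12):
    """Bytes of model state per GPU for ZeRO `stage` in {0, 1, 2, 3}."""
    params, grads, optim = 2 * num_params, 2 * num_params, k * num_params
    if stage >= 1:
        optim /= dp_size   # stage 1: shard optimizer states
    if stage >= 2:
        grads /= dp_size   # stage 2: also shard gradients
    if stage >= 3:
        params /= dp_size  # stage 3: also shard parameters
    return params + grads + optim


for stage in range(4):
    gb = zero_model_state_bytes(7.5e9, dp_size=64, stage=stage) / 1e9
    print(f"ZeRO stage {stage}: {gb:.1f} GB per GPU")
```

The example uses a 7.5B-parameter model on 64 data-parallel ranks; the savings grow with the data-parallel degree because only the sharded terms are divided by `dp_size`.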

[^zero-dp]: [Blog Post](https://www.microsoft.com/en-us/research/blog/zero-deepspeed-new-system-optimizations-enable-training-models-with-over-100-billion-parameters/)

# Tensor Parallelism[^efficient-large-scale]

- In **Tensor Parallelism**, each GPU processes only a slice of a tensor and aggregates the full tensor only for operations that require the whole thing.
- The main building block of any transformer is a fully connected `nn.Linear` layer followed by a nonlinear activation, `GeLU`.
    - `Y = GeLU(XA)`, where `X` and `Y` are the input and output vectors, and `A` is the weight matrix.
- If we look at the computation in matrix form, it’s easy to see how the matrix multiplication can be split between multiple GPUs:
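The column split can also be checked numerically. The sketch below uses plain Python lists in place of device tensors and hypothetical helper names (`matmul`, `split_columns`, `tensor_parallel_gelu`); it assumes the number of columns of `A` divides evenly by the number of shards.

```python
import math

# Sketch of column-wise tensor parallelism for Y = GeLU(X A).
# A is split by columns into one shard per "GPU"; each shard computes
# GeLU(X A_i) independently, and concatenating the results gives Y.
# Plain Python lists stand in for device tensors here.


def gelu(x):
    """Exact GeLU: x * Phi(x), with Phi the standard normal CDF."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def matmul(x, a):
    """Multiply an (n x k) matrix by a (k x m) matrix."""
    return [[sum(xi * aj for xi, aj in zip(row, col)) for col in zip(*a)]
            for row in x]


def split_columns(a, num_shards):
    """Split matrix `a` into `num_shards` equal column blocks."""
    width = len(a[0]) // num_shards  # assumes columns divide evenly
    return [[row[i * width:(i + 1) * width] for row in a]
            for i in range(num_shards)]


def tensor_parallel_gelu(x, a, num_shards):
    """Compute GeLU(X A) shard by shard, then concatenate the columns."""
    outputs = [[[gelu(v) for v in row] for row in matmul(x, shard)]
               for shard in split_columns(a, num_shards)]
    return [sum((out[r] for out in outputs), []) for r in range(len(x))]


X = [[1.0, -2.0], [0.5, 3.0]]
A = [[1.0, 0.0, -1.0, 2.0], [0.5, 1.0, 0.0, -0.5]]
reference = [[gelu(v) for v in row] for row in matmul(X, A)]
sharded = tensor_parallel_gelu(X, A, num_shards=2)
print(sharded == reference)
```

Splitting by columns works here because GeLU is applied element-wise, so each shard can apply the activation to its own output columns without first exchanging data with the others.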

[^efficient-large-scale]: [Efficient Large-Scale Language Model Training on GPU Clusters](https://arxiv.org/abs/2104.04473)

# Tensor Parallelism {style="font-size: 0.9em;"}

::: {style="text-align: center;"}

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/parallelism-tp-parallel_gemm.png" width="66%" style="text-align: center;" />

:::

::: footer

This information is based on (the much more in-depth) [TP Overview](https://github.com/huggingface/transformers/issues/10321#issuecomment-783543530) by [\@anton-l](https://github.com/anton-l)

:::

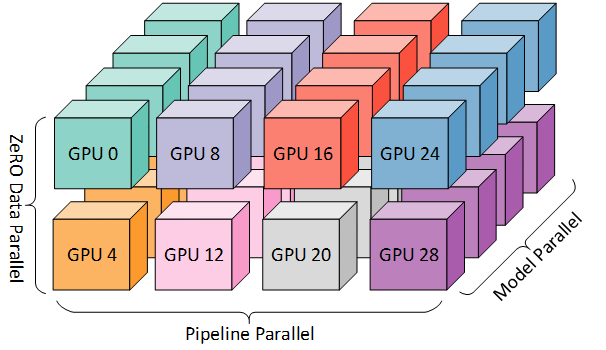

# 3D Parallelism {style="font-size:0.9em;"}

- `DP` + `TP` + `PP` (3D) Parallelism

::: {#3dparallel-1 style="text-align:center!important; width:90%;"}

3D Parallelism illustration. Figure from: [https://www.deepspeed.ai/](https://www.deepspeed.ai/)

:::

# 3D Parallelism

- `DP` + `TP` + `PP` (3D) Parallelism

::: {#3dparallel style="text-align:center!important;"}

Figure taken from [3D parallelism: Scaling to trillion-parameter models](https://www.microsoft.com/en-us/research/blog/deepspeed-extreme-scale-model-training-for-everyone/)

:::
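One way to picture how `DP`, `TP`, and `PP` combine is to place every GPU rank on a three-dimensional grid. The sketch below is illustrative only: `rank_to_coords` is a hypothetical helper, and the ordering (TP varies fastest, then PP, then DP) is an assumption, not necessarily the layout used by Megatron-DeepSpeed.

```python
# Sketch: map a flat rank onto a (dp, pp, tp) grid for 3D parallelism.
# The ordering used here (tp varies fastest, then pp, then dp) is an
# assumption for illustration; real frameworks choose their own layout.


def rank_to_coords(rank, dp_size, pp_size, tp_size):
    """Return (dp, pp, tp) coordinates for `rank`."""
    world_size = dp_size * pp_size * tp_size
    if not 0 <= rank < world_size:
        raise ValueError(f"rank {rank} outside world of size {world_size}")
    tp = rank % tp_size
    pp = (rank // tp_size) % pp_size
    dp = rank // (tp_size * pp_size)
    return dp, pp, tp


# 8 GPUs (e.g. 2 nodes x 4 GPUs) split as dp=2, pp=2, tp=2
coords = [rank_to_coords(r, dp_size=2, pp_size=2, tp_size=2) for r in range(8)]
for rank, c in enumerate(coords):
    print(rank, c)
```

The product `dp_size * pp_size * tp_size` must equal the total number of GPUs. Keeping neighbouring ranks in the same TP group is a common choice, since tensor parallelism tends to communicate most often.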

# Running on ALCF {style="font-size: 0.8em; width:100%;"}

- We've provided a virtual environment complete with all dependencies for running [{{< fa brands github >}} `argonne-lcf/Megatron-DeepSpeed`](https://github.com/argonne-lcf/Megatron-DeepSpeed)

```bash

# navigate to directory ---------------------------------------

WORKSHOP_DIR="/lus/grand/projects/fallwkshp23/"

PROJECTS_DIR="${WORKSHOP_DIR}/foremans/projects"

PROJECT_DIR="${PROJECTS_DIR}/argonne-lcf/Megatron-DeepSpeed"

cd "${PROJECT_DIR}"

# load conda module and activate venv -------------------------

module load conda/2023-10-04; conda activate base

source venvs/polaris/2023-10-04/bin/activate

# set runtime environment variables ---------------------------

export IBV_FORK_SAFE=1

export CUDA_DEVICE_MAX_CONNECTIONS=1

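# set environment variables for running -----------------------
# (exported so that ./ALCF/train-gpt3.sh, a child process, inherits them)
export SEQ_LEN=1024
export MICRO_BATCH=1
export SP_TYPE="megatron"
export MODEL_SIZE_KEY="GPT1_5B"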

# launch training --------------------------------------------

./ALCF/train-gpt3.sh

```

# Running on ALCF {style="font-size: 0.775em;"}

- Executable:

```bash

MODEL_SIZE_KEY="GPT1_5B" SEQ_LEN=1024 MICRO_BATCH=1 SP_TYPE="megatron" ./ALCF/train-gpt3.sh

```

<details open><summary><b>Output</b></summary>

```bash

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

ALCF_DIR: /lus/grand/projects/fallwkshp23/foremans/locations/polaris/projects/argonne-lcf/Megatron-DeepSpeed/ALCF

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

source-ing /lus/grand/projects/fallwkshp23/foremans/locations/polaris/projects/argonne-lcf/Megatron-DeepSpeed/ALCF/setup.sh

Setting up MPI on Polaris from x3210c0s1b0n0

++ SetupMPI() +++++++++++++++++++++++++++++++++

Using HOSTFILE: /var/spool/pbs/aux/1126584.polaris-pbs-01.hsn.cm.polaris.alcf.anl.gov

NHOSTS: 2

NGPU_PER_HOST: 4

NGPUS: 8

+++++++++++++++++++++++++++++++++++++++++++++++

Skipping setupThetaGPU() on x3210c0s1b0n0

Setting up MPI on Polaris from x3210c0s1b0n0

USING PYTHON: /lus/grand/projects/fallwkshp23/foremans/locations/polaris/projects/argonne-lcf/Megatron-DeepSpeed/venvs/polaris/2023-10-04/bin/python3

[...]

```

</details>

# Running on ALCF {style="font-size: 0.8em;"}

Once the text has _finally_ stopped printing, you should see output similar to the following:

::: {.code style="font-size:0.8em;"}

```bash

Job started at: 2023-10-11-092906 on x3210c0s1b0n0

[...]

Writing logs to: /lus/grand/projects/fallwkshp23/foremans/locations/polaris/projects/argonne-lcf/Megatron-DeepSpeed/outputs/gpt_SP_actCkpt_GPT13B_z1_seqlen1024_mp8_pp1_sp1_nl40_hs5120_gb1_mb1

to view output: tail -f $(tail -1 logfiles)

i.e. tail -f /lus/grand/projects/fallwkshp23/foremans/locations/polaris/projects/argonne-lcf/Megatron-DeepSpeed/outputs/gpt_SP_actCkpt_GPT13B_z1_seqlen1024_mp8_pp1_sp1_nl40_hs5120_gb1_mb1/logs/foremans-x3210c0s1b0n0-nhosts2-ngpu8-2023-10-11-092906.log

```

:::

- To watch / view the output:

```bash

tail -fn 1000 $(tail -1 logfiles) | less

```

- The output will look like[^wbrun]:

::: {.code style="font-size:0.8em;"}

```bash

Job started at: 2023-10-11-092906 on x3210c0s1b0n0

Training GPT-3 with GPT13B parameters

Writing logs to: /lus/grand/projects/fallwkshp23/foremans/locations/polaris/projects/argonne-lcf/Megatron-DeepSpeed/outputs/gpt_SP_actCkpt_GPT13B_z1_seqlen1024_mp8_pp1_sp1_nl40_hs5120_gb1_mb1

to view output: tail -f $(tail -1 logfiles)

i.e. tail -f /lus/grand/projects/fallwkshp23/foremans/locations/polaris/projects/argonne-lcf/Megatron-DeepSpeed/outputs/gpt_SP_actCkpt_GPT13B_z1_seqlen1024_mp8_pp1_sp1_nl40_hs5120_gb1_mb1/logs/foremans-x3210c0s1b0n0-nhosts2-ngpu8-2023-10-11-092906.log

using: /lus/grand/projects/fallwkshp23/foremans/locations/polaris/projects/argonne-lcf/Megatron-DeepSpeed/venvs/polaris/2023-10-04/bin/python3

[...]

```

:::

[^wbrun]: [🚀 W&B Run: `soft-wave-264`](https://wandb.ai/l2hmc-qcd/GenSLM-Megatron-DS/runs/1uve3tdk?workspace=user-saforem2)

# Getting Started at ALCF {.scrollable style="font-size: 0.85em;"}

- We provide below the **details** for installing / getting started on ALCF (Polaris)

- Installation:

1. {{< fa brands github >}} Clone GitHub repo:

```bash

git clone https://github.com/argonne-lcf/Megatron-DeepSpeed

```

2. Load Conda module:

- Polaris:

```bash

if [[ "$(hostname)" == x3* ]]; then

export MACHINE="Polaris"

export CONDA_DATE="2023-10-04"

module load conda/${CONDA_DATE}

conda activate base

fi

```

- ThetaGPU:

```bash

if [[ "$(hostname)" == theta* ]]; then

export MACHINE="ThetaGPU"

export CONDA_DATE="2023-01-10"

module load conda/${CONDA_DATE}

conda activate base

fi

```
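The same hostname-based selection can be sketched as a small lookup in Python, for example when a launcher script needs to know which machine it is on. The patterns and conda dates are taken from the two shell snippets above; `MACHINES` and `detect_machine` are hypothetical names.

```python
import fnmatch
import socket

# Sketch of the hostname check above as a reusable lookup. The patterns and
# conda dates are taken from the two shell snippets; the function name and
# return format are hypothetical.

MACHINES = [
    ("x3*", "Polaris", "2023-10-04"),
    ("theta*", "ThetaGPU", "2023-01-10"),
]


def detect_machine(hostname):
    """Return (machine, conda_date) for a hostname, or None if unknown."""
    for pattern, machine, conda_date in MACHINES:
        if fnmatch.fnmatchcase(hostname, pattern):
            return machine, conda_date
    return None


print(detect_machine(socket.gethostname()))
```

On a machine that matches neither pattern, the function returns `None`, which makes an unrecognized host explicit instead of silently skipping the setup.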

# Getting Started {style="font-size: 0.9em;"}

3. Setup virtual environment[^venv]:

```bash

cd Megatron-DeepSpeed

# create a new virtual environment

mkdir -p "venvs/${MACHINE}/${CONDA_DATE}"

python3 -m venv "venvs/${MACHINE}/${CONDA_DATE}" --system-site-packages

source "venvs/${MACHINE}/${CONDA_DATE}/bin/activate"

```

4. Create a new folder where we'll install dependencies:

```bash

mkdir -p "deps/${MACHINE}"

cd "deps/${MACHINE}"

```

[^venv]: **On-top of** the base `conda` environment (`--system-site-packages`)

# Install Dependencies {.centerdedslide style="height:100%; font-size: 0.9em;" auto-animate=true}

::: {.callout-note icon=false title="{{< fa brands python >}} `conda/2023-10-04`" collapse="false" style="text-align: left!important; width:100%!important; border-color: var(--dim-color)!important; background-color: var(--bg-transparent)!important;"}

**Note**: The following instructions _should be_ unnecessary on Polaris.

:::

::: {.panel-tabset style="font-size: 0.8em; width: 100%!important; height: 100%!important;"}

### {{< fa brands github >}} Dao-AILab/flash-attention

- The [new release]() supports three different implementations of FlashAttention: (`v1.0.4`, `v2.x`, `triton`)

- FlashAttention `v2.x` may have numerical instability issues. For the best performance, we recommend using FlashAttention + Triton.

- [{{< fa brands github >}} `Dao-AILab/flash-attention`](https://github.com/Dao-AILab/flash-attention):

- `v1.0.4`:

```bash

python3 -m pip install flash-attn==1.0.4

```

- `v2.x`:

```bash

git clone https://github.com/Dao-AILab/flash-attention

cd flash-attention

python3 setup.py install

```

- `openai/triton`:

```bash

git clone -b legacy-backend https://github.com/openai/triton

cd triton/python

python3 -m pip install cmake pybind11

python3 -m pip install .

```

### {{< fa brands github >}} saforem2/ezpz

::: {#ezpz}

- [{{< fa brands github >}} `saforem2/ezpz`](https://github.com/saforem2/ezpz)

```bash

python3 -m pip install -e "git+https://github.com/saforem2/ezpz.git#egg=ezpz"

```

:::

### {{< fa brands github >}} NVIDIA/apex

::: {layout-ncol=2 layout-valign="top"}

::: {#column-one}

- [{{< fa brands github >}} `NVIDIA/apex`](https://github.com/NVIDIA/apex)

```bash

git clone https://github.com/NVIDIA/apex
# enter the freshly cloned repo
cd apex
pip install -v \
    --disable-pip-version-check \
    --no-cache-dir \
    --no-build-isolation \
    --global-option="--cpp_ext" \
    --global-option="--cuda_ext" \
    -e \
    ./

```

:::

::: {.callout-important icon=false title="{{< fa brands python >}} `conda/2023-10-04`" collapse="false" style="text-align: left!important; width:100%!important; border-color: var(--dim-color)!important; background-color: var(--bg-transparent)!important;"}

**Note**: `apex` is **already installed** in the base `conda/2023-10-04` environment on Polaris.

:::

:::

:::

# Running

- The [{{< fa brands github >}} `ALCF/`](https://github.com/argonne-lcf/Megatron-DeepSpeed/tree/main/ALCF) directory contains shell scripts for setting up the environment and specifying options to be used for training.

::: {layout="[ 30, -2, 45 ]" layout-valign="top"}

::: {#column-two style="font-size:1.0em!important; line-height: 1.2em!important; font-family: monospace;"}

::: {style="line-height: 1.1em;"}

- {{< fa solid folder-open >}} [`ALCF/`](https://github.com/argonne-lcf/Megatron-DeepSpeed/tree/main/ALCF)

`├──` [`args.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/args.sh)

`├──` [`launch.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/launch.sh)

`├──` [`model.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/model.sh)

`├──` [`setup.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/setup.sh)

`├──` [`submit-pbs.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/submit-pbs.sh)

`├──` [`submit.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/submit.sh)

`└──` [`train-gpt3.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/train-gpt3.sh)

:::

:::

::: {#column-one}

- Various options can be specified dynamically at runtime by setting them in your environment, e.g.:

```bash
# Export the options so the training script can read them:
export MODEL_SIZE_KEY="GPT25B"
export SEQ_LEN=1024
export USE_FLASH_ATTN=1
export MICRO_BATCH=1
export GAS=1
export SP_TYPE="megatron"
export ZERO_STAGE=1
# Launch training:
./ALCF/train-gpt3.sh
```

:::

:::
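One common way for a shell script to honor such overrides is Bash's `${VAR:-default}` expansion, which keeps a value already present in the environment and otherwise falls back to a default. The following is a minimal sketch of that pattern only; the default values are illustrative assumptions and this is not necessarily how the `ALCF/*.sh` scripts resolve their options.

```bash
#!/usr/bin/env bash
# Simulate a caller that overrides only SEQ_LEN (illustrative values).
export SEQ_LEN=2048
unset MICRO_BATCH ZERO_STAGE

# Keep any value already set in the environment; otherwise use a default.
# NOTE: these defaults are assumptions chosen for the example.
SEQ_LEN="${SEQ_LEN:-1024}"
MICRO_BATCH="${MICRO_BATCH:-1}"
ZERO_STAGE="${ZERO_STAGE:-1}"

echo "SEQ_LEN=${SEQ_LEN} MICRO_BATCH=${MICRO_BATCH} ZERO_STAGE=${ZERO_STAGE}"
```

Here only `SEQ_LEN` keeps the caller's value; the two unset options fall back to their defaults.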

# Details {style="font-size: 0.9em;"}

Explicitly:

- [{{< fa brands github >}} `ALCF/train-gpt3.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/train-gpt3.sh): **Main entry point for training**. This script will:
    - Source the rest of the required [`ALCF/*.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/) scripts below

- [{{< fa brands github >}} `ALCF/models.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/models.sh): Contains some example model architectures for GPT3-style models

- [{{< fa brands github >}} `ALCF/args.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/args.sh): Logic for parsing / setting up runtime options for Megatron and DeepSpeed

- [{{< fa brands github >}} `ALCF/setup.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/setup.sh): Locate and activate the virtual environment to be used, and ensure MPI variables are set properly

- [{{< fa brands github >}} `ALCF/launch.sh`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/ALCF/launch.sh): Identify available resources and build the command to be executed
    - i.e. figure out how many: `{nodes, GPUs per node, GPUs total}`, to pass to `mpi{run,exec}`
    - then, use this to launch `mpiexec <mpiexec-args> python3` [`pretrain_gpt.py`](https://github.com/argonne-lcf/Megatron-DeepSpeed/blob/main/pretrain_gpt.py) `<gpt-args>`
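The resource-discovery step described for `ALCF/launch.sh` can be illustrated roughly as follows. This is a hedged sketch, not the script's actual contents: the variable names (`HOSTFILE`, `NHOSTS`, `NGPU_PER_HOST`, `NGPUS`), the two-node host list, the value of 4 GPUs per node, and the particular `mpiexec` flags are all assumptions made for the example, and the command is only printed, never executed.

```bash
#!/usr/bin/env bash
# Stand-in for the node list a scheduler would provide (hypothetical hosts).
HOSTFILE="$(mktemp)"
printf 'node-a\nnode-b\n' > "${HOSTFILE}"

# Number of nodes = number of lines in the hostfile.
NHOSTS=$(( $(wc -l < "${HOSTFILE}") ))
# Assumed GPUs per node; adjust for the actual machine.
NGPU_PER_HOST=4
# Total number of GPUs (one MPI rank per GPU).
NGPUS=$(( NHOSTS * NGPU_PER_HOST ))

# Build (but do not run) the launch command; the flags are assumptions.
LAUNCH_CMD="mpiexec -n ${NGPUS} --ppn ${NGPU_PER_HOST} --hostfile ${HOSTFILE} python3 pretrain_gpt.py <gpt-args>"
echo "${LAUNCH_CMD}"

rm -f "${HOSTFILE}"
```

Printing the command before running it makes it easy to check the rank counts against the allocation.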

# [DeepSpeed4Science](https://deepspeed4science.ai/) {background-color="#000000" height="100%" style="height: 100%!important; font-size: 0.9em;"}

- [Long Sequence Support for GenSLM Model](https://deepspeed4science.ai/2023/09/18/model-showcase-genslms/)

::: {#ds4sci-logo style="text-align: center;"}


:::

::: {#genslm style="text-align: center; font-size: 0.8em;"}

<img src="https://deepspeed4science.ai/wp-content/uploads/2023/09/Figure-8.gif" width="75%" align="center" />

Latent space of biologically meaningful properties for SARS-CoV-2 genomes

:::

# Loooooooooong Sequence Lengths {style="height:100%; font-size:0.9em;" auto-animate=true}


| Sequence Length | Old Megatron-DeepSpeed (TFLOPS) | New Megatron-DeepSpeed (TFLOPS) |
|:---------------:|:--------------------------------:|:--------------------------------:|
| 2k | [25]{style="font-weight:600;"} | [68]{style="font-weight:600;"} |
| 4k | [28]{style="font-weight:600;"} | [80]{style="font-weight:600;"} |
| 8k | [OOM]{.red-text} | [86]{style="font-weight:600;"} |
| 16k | [OOM]{.red-text} | [92]{style="font-weight:600;"} |
| 32k | [OOM]{.red-text} | [100]{style="font-weight:600;"} |
| 64k | [OOM]{.red-text} | [106]{style="font-weight:600;"} |
| 128k | [OOM]{.red-text} | [119]{style="font-weight:600;"} |
| 256k | [OOM]{.red-text} | [94]{style="font-weight:600;"} |

: Long sequence length support from [`microsoft/Megatron-DeepSpeed`](https://github.com/microsoft/Megatron-DeepSpeed) {#tbl-results .striped .hover}

# Loooooooooong Sequence Lengths {style="height:100%; font-size:0.8em;" auto-animate=true}

- Working with the [{{< fa brands microsoft >}} Microsoft DeepSpeed](https://github.com/microsoft/DeepSpeed) team to enable longer sequence lengths (context windows) for LLMs[^long]

- [Release: **DeepSpeed4Science Overview and Tutorial**](https://www.deepspeed.ai/deepspeed4science/)

::: {#fig-ds4sci layout="[[1], [1,1]]" style="text-align:center;"}


Maximum (achievable) `SEQ_LEN` for both `25B` and `33B` models [$[$WIP$]$]{.red-text}

:::

::: footer

[{{< fa brands github >}} `argonne-lcf/Megatron-DeepSpeed`](https://github.com/argonne-lcf/Megatron-DeepSpeed)

:::

[^long]: The described experiments were performed on 4 NVIDIA DGX A100-40GB nodes, all using TPSIZE=32[^tpsize], connected through 8 HDR InfiniBand (200 Gb/s per HDR).

# Loooooooooong Sequence Lengths {.centeredslide style="height:100%; font-size:0.8em;" auto-animate=true}

- We can evaluate the performance of our model by looking at two different metrics for throughput: `samples_per_sec` and `TFLOPS`.

- Explicitly, we see that we are able to scale up to significantly longer sequences (`420k / 128k ~ 3.3x`) while retaining most of the baseline throughput (`81 / 105 ~ 77%`)[^tflops-scaling].

::: {style="font-size:0.8em;"}

| Name | Sequence Length (k) | (`seq_len / min_seq_len`) | TFLOPS | TFLOPS (% of 128k baseline) |
|:------:|:-------------------:|:-----------------------:|:--------:|:------------------:|
| GPT25B | 420 | [**3.28125**]{.blue-text} | 81.77225 | [**77.867**]{.blue-text} |
| GPT25B | 400 | 3.125 | 90.62 | 86.297 |
| GPT25B | 360 | 2.8125 | 81.6325 | 77.7348 |
| GPT25B | 360 | 2.8125 | 82.6824 | 78.7346 |
| GPT25B | 192 | 1.5 | 115.8228 | 110.2927 |
| GPT25B | 128 | 1 | 106.672 | 101.5788 |
| GPT25B | 128 | 1 | 105.014 | 100.00 |

: Impact on TFLOPS as a function of increasing sequence length. Table from: [`throughput/TFLOPS`](https://api.wandb.ai/links/l2hmc-qcd/awklywn7) {#tbl-seqlen .striped .hover}

:::
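As a quick check of the two headline ratios, the arithmetic can be reproduced from the first and last rows of the table. This is only a sketch of the calculation; the variable names are hypothetical, and the 128k row with 105.014 TFLOPS is taken as the baseline.

```bash
#!/usr/bin/env bash
# Values copied from the table above (baseline = 128k row, 105.014 TFLOPS).
BASE_SEQ=128
BASE_TFLOPS=105.014
LONG_SEQ=420
LONG_TFLOPS=81.77225

# Sequence-length ratio: seq_len / min_seq_len
SEQ_RATIO="$(awk -v a="${LONG_SEQ}" -v b="${BASE_SEQ}" 'BEGIN { printf "%.5f", a / b }')"
# Throughput at the longest sequence, as a percentage of the baseline
TFLOPS_PCT="$(awk -v a="${LONG_TFLOPS}" -v b="${BASE_TFLOPS}" 'BEGIN { printf "%.1f", 100 * a / b }')"

echo "seq_len ratio: ${SEQ_RATIO}x, throughput retained: ${TFLOPS_PCT}%"
```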

[^tflops-scaling]: [`throughput/TFLOPS`](https://api.wandb.ai/links/l2hmc-qcd/awklywn7)

# Links

1. [{{< fa brands github >}} Hannibal046/Awesome-LLM](https://github.com/Hannibal046/Awesome-LLM/blob/main/README.md)

2. [{{< fa brands github >}} Mooler0410/LLMsPracticalGuide](https://github.com/Mooler0410/LLMsPracticalGuide)

3. [Large Language Models (in 2023)](https://docs.google.com/presentation/d/1636wKStYdT_yRPbJNrf8MLKpQghuWGDmyHinHhAKeXY/edit#slide=id.g238b2698243_0_734)

4. [The Illustrated Transformer](http://jalammar.github.io/illustrated-transformer/)

5. [Generative AI Exists because of the Transformer](https://ig.ft.com/generative-ai/)

6. [GPT in 60 Lines of Numpy](https://jaykmody.com/blog/gpt-from-scratch/)

7. [Better Language Models and their Implications](https://openai.com/research/better-language-models)

8. [{{< fa solid flask-vial >}}]{.green-text} [Progress / Artefacts / Outcomes from 🌸 Bloom BigScience](https://bigscience.notion.site/ebe3760ae1724dcc92f2e6877de0938f?v=2faf85dc00794321be14bc892539dd4f)

::: {.callout-note title="Acknowledgements"}

This research used resources of the Argonne Leadership Computing Facility,

which is a DOE Office of Science User Facility supported under Contract DE-AC02-06CH11357.

:::

# References

::: {#refs}

:::