Colossal-AI: Making big AI models cheaper, easier, and more scalable

Paper | Documentation | Examples | Forum | Blog

- [2023/02] Open source solution replicates ChatGPT training process! Ready to go with only 1.6GB GPU memory

- [2023/01] Hardware Savings Up to 46 Times for AIGC and Automatic Parallelism

- [2022/11] Diffusion Pretraining and Hardware Fine-Tuning Can Be Almost 7X Cheaper

- [2022/10] Use a Laptop to Analyze 90% of Proteins, With a Single-GPU Inference Sequence Exceeding 10,000

- [2022/09] HPC-AI Tech Completes $6 Million Seed and Angel Round Fundraising

- Why Colossal-AI

- Features

- Parallel Training Demo

- Single GPU Training Demo

- Inference (Energon-AI) Demo

- Colossal-AI for Real World Applications

- Installation

- Use Docker

- Community

- Contributing

- Cite Us

Prof. James Demmel (UC Berkeley): Colossal-AI makes training AI models efficient, easy, and scalable.

Colossal-AI provides a collection of parallel components for you. We aim to let you write distributed deep learning models the same way you write a model on your laptop. We provide user-friendly tools to kickstart distributed training and inference in a few lines.

-

Parallelism strategies

- Data Parallelism

- Pipeline Parallelism

- 1D, 2D, 2.5D, 3D Tensor Parallelism

- Sequence Parallelism

- Zero Redundancy Optimizer (ZeRO)

- Auto-Parallelism

-

Heterogeneous Memory Management

-

Friendly Usage

- Parallelism based on configuration file

-

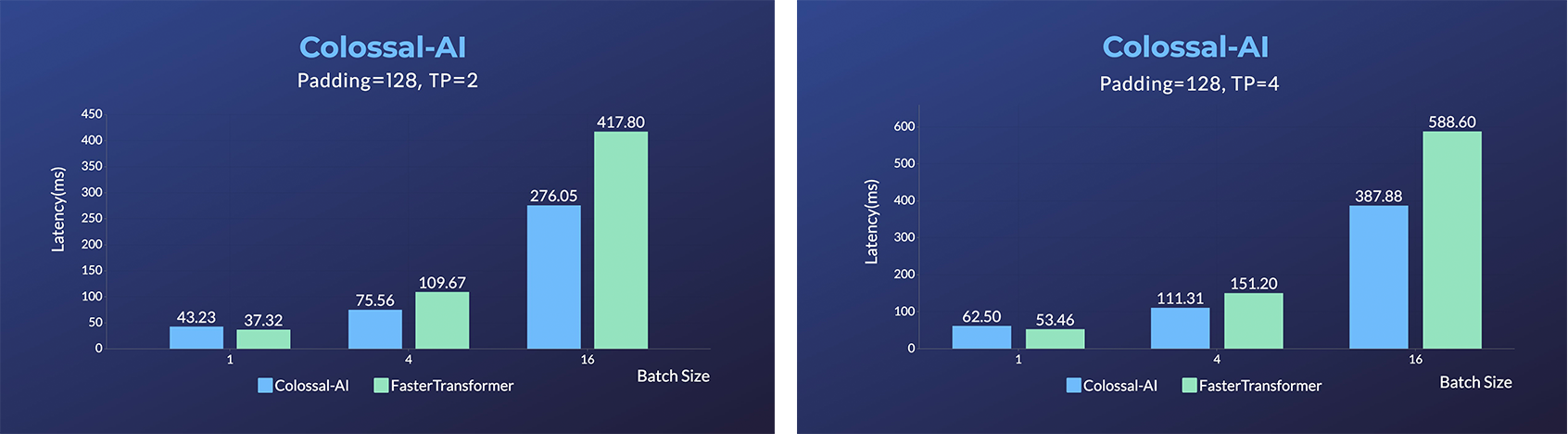

Inference
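As a rough illustration of the configuration-based parallelism listed under Friendly Usage, the sketch below shows what such a configuration file might look like and how the remaining data-parallel degree follows from it. The `parallel` dict mirrors the style used in the project's documentation, but treat the exact keys as an assumption and check them against the version you install. `data_parallel_size` is a hypothetical helper written for this example, not part of the library.

```python
# Hypothetical config.py for a 16-GPU job. The layout of `parallel` is an
# assumption based on the project's documented style; verify the keys
# against the Colossal-AI version you actually use.
parallel = dict(
    pipeline=2,                      # number of pipeline stages
    tensor=dict(size=4, mode="2d"),  # 4-way 2D tensor parallelism
)


def data_parallel_size(world_size: int, config: dict) -> int:
    """Return how many data-parallel replicas remain after the pipeline and
    tensor dimensions are carved out of `world_size` devices.

    Illustrative helper only; it is not a Colossal-AI function.
    """
    pipeline = config.get("pipeline", 1)
    tensor = config.get("tensor", {}).get("size", 1)
    model_parallel = pipeline * tensor
    if world_size % model_parallel != 0:
        raise ValueError(
            f"world_size={world_size} is not divisible by "
            f"pipeline*tensor={model_parallel}"
        )
    return world_size // model_parallel


if __name__ == "__main__":
    # 16 devices / (2 pipeline stages * 4 tensor shards) = 2 replicas
    print(data_parallel_size(16, parallel))
```

Keeping the parallel layout in a configuration file means the model code does not need to change when the device count or strategy does.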

- Save 50% of GPU resources, with 10.7% acceleration

- 11x lower GPU memory consumption, and superlinear scaling efficiency with Tensor Parallelism

- 24x larger model size on the same hardware

- over 3x acceleration

- 2x faster training, or 50% longer sequence length

- PaLM-colossalai: Scalable implementation of Google's Pathways Language Model (PaLM).
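The Tensor Parallelism mentioned in the results above splits a layer's weight matrix across devices. The following is a minimal pure-Python sketch of the 1D (column-wise) variant, assuming equal-sized shards and simulating the devices in a single process. All function names are made up for this example, and it does not reflect how Colossal-AI implements the technique internally.

```python
def matmul(x, w):
    """Multiply a (rows x k) matrix by a (k x cols) matrix, both nested lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*w)] for row in x]


def split_columns(w, parts):
    """Split the columns of `w` into `parts` equal shards, one per simulated device."""
    cols = len(w[0])
    if cols % parts != 0:
        raise ValueError("column count must be divisible by the number of shards")
    step = cols // parts
    return [[row[i * step:(i + 1) * step] for row in w] for i in range(parts)]


def column_parallel_matmul(x, w, parts):
    """Each simulated device multiplies the full input by its own column shard.

    Concatenating the partial outputs along the column axis (an all-gather in
    a real distributed system) reproduces the unsharded result.
    """
    partials = [matmul(x, shard) for shard in split_columns(w, parts)]
    return [sum((p[r] for p in partials), []) for r in range(len(x))]


if __name__ == "__main__":
    x = [[1, 2], [3, 4]]
    w = [[1, 0, 2, 1], [0, 1, 1, 3]]
    # The sharded computation matches the single-device computation.
    assert column_parallel_matmul(x, w, parts=2) == matmul(x, w)
```

Each device stores only its own slice of the weight columns, which is where the memory saving comes from; the cost is the communication needed to gather the partial outputs.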

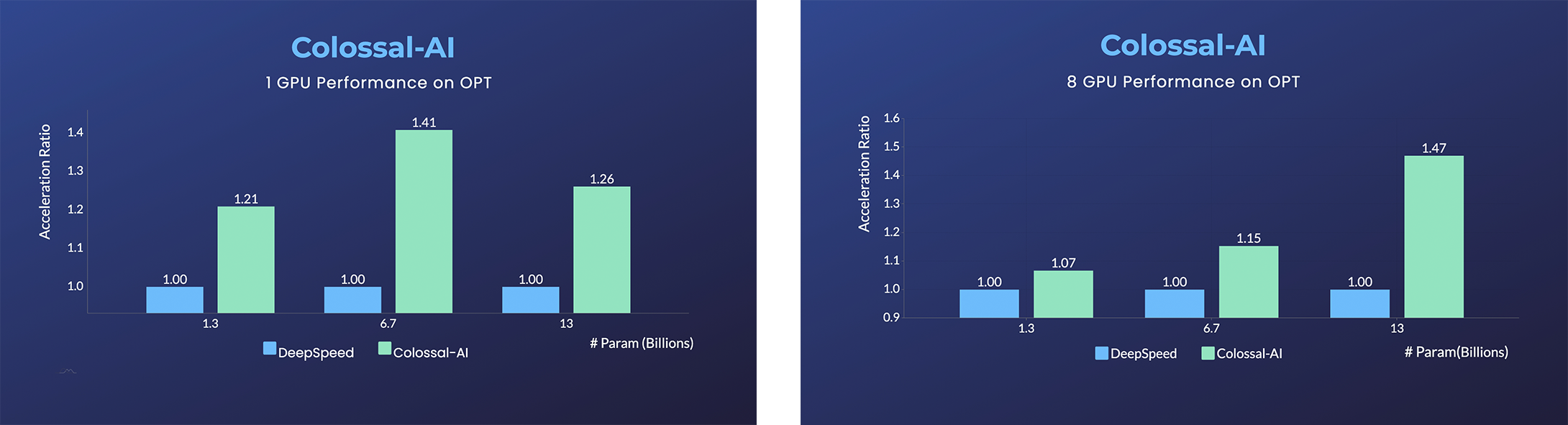

- Open Pretrained Transformer (OPT), a 175-billion-parameter AI language model released by Meta, whose publicly available pretrained weights encourage AI programmers to pursue various downstream tasks and application deployments.

- 45% speedup when fine-tuning OPT at low cost, in a few lines of code. [Example] [Online Serving]

Please visit our documentation and examples for more details.

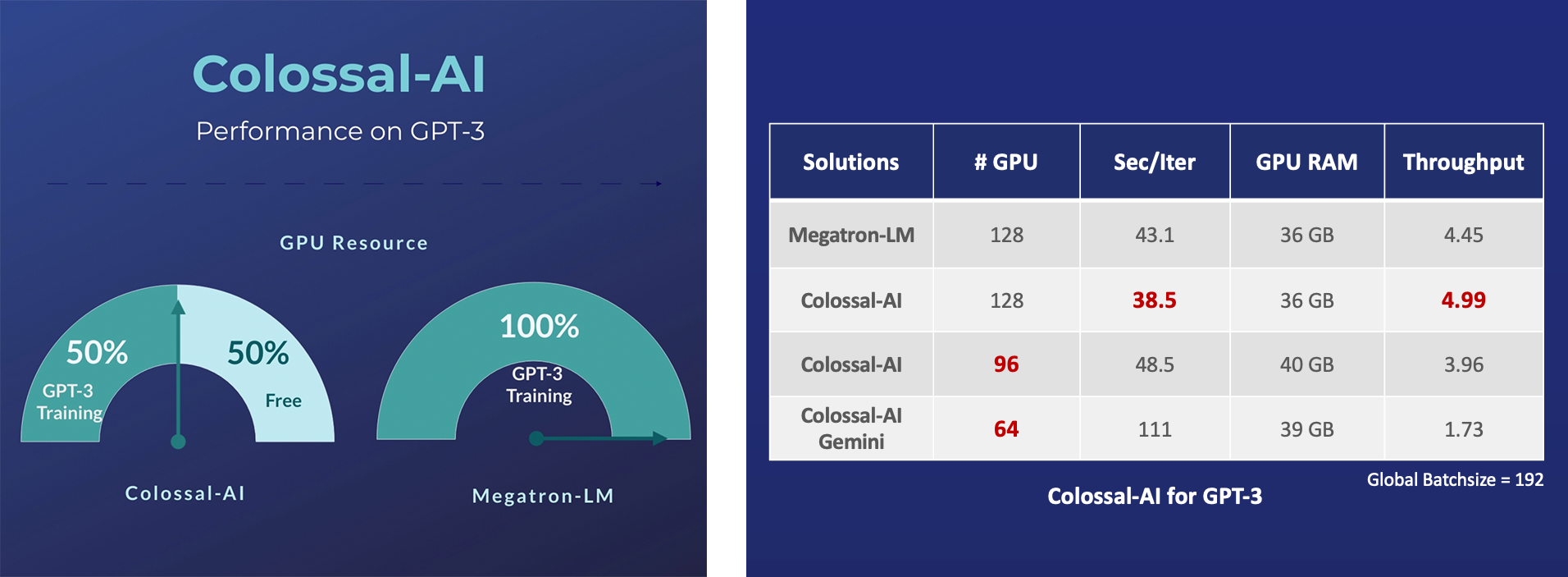

- 14x larger batch size, and 5x faster training for Tensor Parallelism = 64

- Cached Embedding: uses a software cache to train larger embedding tables with a smaller GPU memory budget.

- 20x larger model size on the same hardware

- 120x larger model size on the same hardware (RTX 3080)

- 34x larger model size on the same hardware

- Energon-AI: 50% inference acceleration on the same hardware

- OPT Serving: Try 175-billion-parameter OPT online services

- BLOOM: Reduce hardware deployment costs of 176-billion-parameter BLOOM by more than 10 times.
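To make the Cached Embedding idea from the list above more concrete, here is a toy sketch of a software cache for embedding rows. It assumes the full table lives in host memory and only a fixed number of rows fit in a small device-side cache. The class name and the least-recently-used eviction policy are assumptions chosen for simplicity, not a description of the project's actual implementation.

```python
from collections import OrderedDict


class CachedEmbedding:
    """Toy software cache: the full table stays in 'host' memory and at most
    `capacity` rows are held in a small 'device' cache with LRU eviction.

    Hypothetical class for illustration; not the Colossal-AI API.
    """

    def __init__(self, host_table, capacity):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.host_table = host_table
        self.capacity = capacity
        self.device_cache = OrderedDict()
        self.hits = 0
        self.misses = 0

    def lookup(self, row_id):
        """Return the embedding row, loading it into the cache on a miss."""
        if row_id in self.device_cache:
            self.hits += 1
            self.device_cache.move_to_end(row_id)  # mark as most recently used
        else:
            self.misses += 1
            if len(self.device_cache) >= self.capacity:
                self.device_cache.popitem(last=False)  # evict least recently used
            self.device_cache[row_id] = self.host_table[row_id]
        return self.device_cache[row_id]


if __name__ == "__main__":
    table = {i: [float(i), float(i)] for i in range(10)}
    emb = CachedEmbedding(table, capacity=2)
    for row_id in (1, 2, 1, 3, 2):
        emb.lookup(row_id)
    print(emb.hits, emb.misses)
```

Because embedding lookups in many workloads are skewed toward a small set of frequent rows, a cache much smaller than the table can still serve most lookups without touching host memory.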

A low-cost ChatGPT equivalent implementation process. [code] [blog]

- Up to 7.73 times faster for single server training and 1.42 times faster for single-GPU inference

- Up to 10.3x growth in model capacity on one GPU

- A mini demo training process requires only 1.62GB of GPU memory (any consumer-grade GPU)

- Increase the capacity of the fine-tuning model by up to 3.7 times on a single GPU

- Maintain a sufficiently high running speed

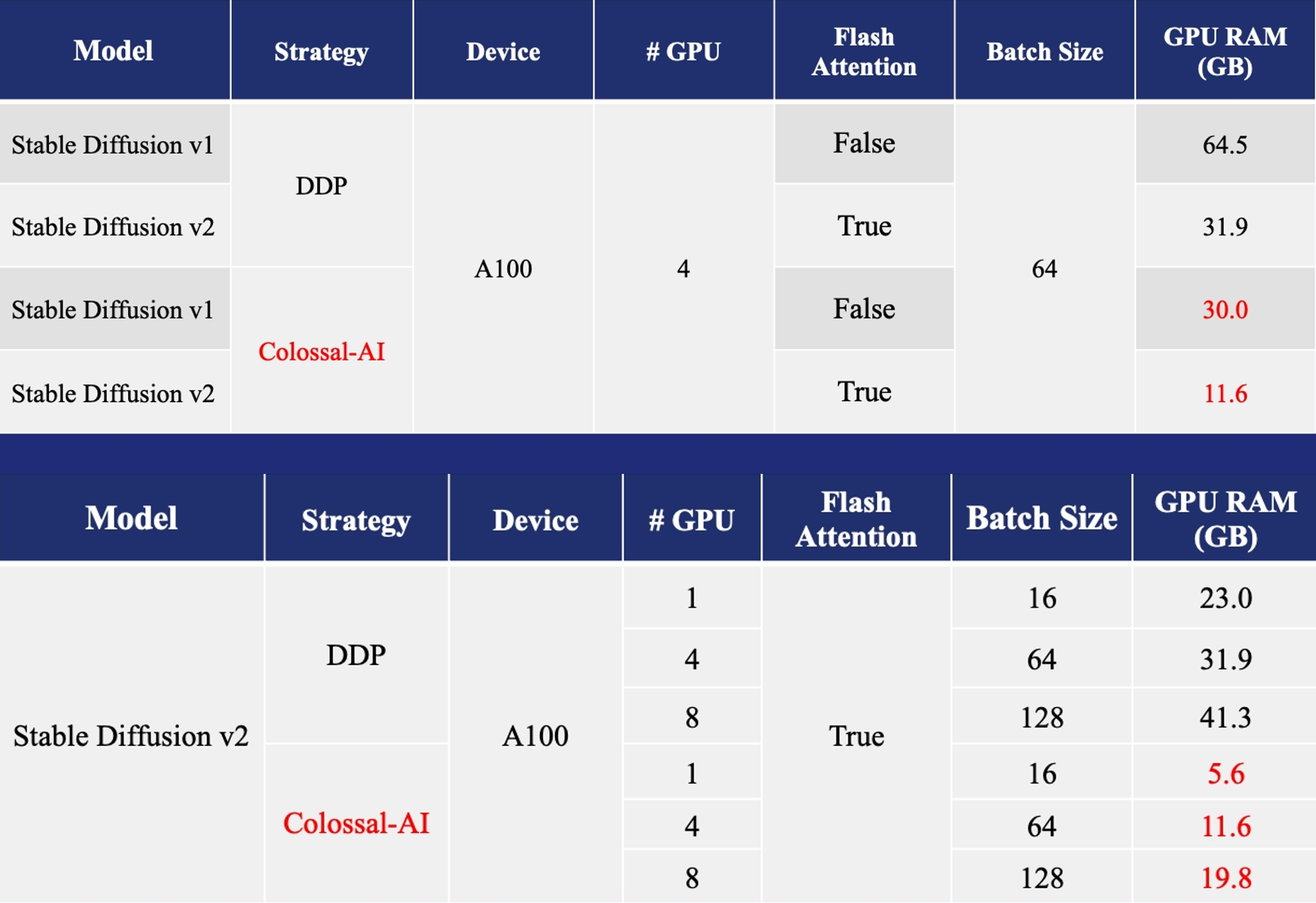

Acceleration of AIGC (AI-Generated Content) models such as Stable Diffusion v1 and Stable Diffusion v2.

- Training: Reduce Stable Diffusion memory consumption by up to 5.6x and hardware cost by up to 46x (from A100 to RTX3060).

- DreamBooth Fine-tuning: Personalize your model using just 3-5 images of the desired subject.

- Inference: Reduce inference GPU memory consumption by 2.5x.

Acceleration of AlphaFold Protein Structure

- FastFold: accelerates training and inference on GPU clusters, speeds up data processing, and supports inference on sequences containing more than 10,000 residues.

- xTrimoMultimer: accelerates structure prediction of protein monomers and multimers by 11x.

You can easily install Colossal-AI with the following command. By default, we do not build PyTorch extensions during installation.

pip install colossalai

However, if you want to build the PyTorch extensions during installation, you can set CUDA_EXT=1.

CUDA_EXT=1 pip install colossalai

Otherwise, CUDA kernels will be built at runtime when you actually need them.

We also release a nightly version to PyPI on a weekly basis. This gives you access to unreleased features and bug fixes in the main branch. You can install it with

pip install colossalai-nightly

The version of Colossal-AI will be in line with the main branch of the repository. Feel free to raise an issue if you encounter any problem. :)
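After installing, it can be useful to confirm which of the two distributions ended up in your environment. The sketch below uses only the Python standard library (`importlib.metadata`, available from Python 3.8) and the two package names given above; `installed_version` is a hypothetical helper written for this example.

```python
from importlib import metadata


def installed_version(package: str):
    """Return the installed version string of `package`, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    # Package names are taken from the pip commands in this README.
    for name in ("colossalai", "colossalai-nightly"):
        print(name, installed_version(name))
```

A result of `None` for both names means neither distribution is installed in the active environment.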

git clone https://github.com/hpcaitech/ColossalAI.git

cd ColossalAI

# install colossalai

pip install .

By default, we do not compile CUDA/C++ kernels; Colossal-AI will build them at runtime. If you want to install and enable CUDA kernel fusion (compulsory when using the fused optimizer):

CUDA_EXT=1 pip install .

You can directly pull the Docker image from our DockerHub page. The image is automatically uploaded upon release.

Run the following command to build a Docker image from the provided Dockerfile.

Building Colossal-AI from scratch requires GPU support, so you need to use the Nvidia Docker Runtime as the default when running docker build. More details can be found here. We recommend you install Colossal-AI from our project page directly.

cd ColossalAI

docker build -t colossalai ./docker

Run the following command to start the Docker container in interactive mode.

docker run -ti --gpus all --rm --ipc=host colossalai bash

Join the Colossal-AI community on Forum, Slack, and WeChat to share your suggestions, feedback, and questions with our engineering team.

If you wish to contribute to this project, please follow the guidelines in Contributing.

Thanks so much to all of our amazing contributors!

The order of contributor avatars is randomly shuffled.

We leverage the power of GitHub Actions to automate our development, release and deployment workflows. Please check out this documentation on how the automated workflows are operated.

@article{bian2021colossal,

title={Colossal-AI: A Unified Deep Learning System For Large-Scale Parallel Training},

author={Bian, Zhengda and Liu, Hongxin and Wang, Boxiang and Huang, Haichen and Li, Yongbin and Wang, Chuanrui and Cui, Fan and You, Yang},

journal={arXiv preprint arXiv:2110.14883},

year={2021}

}

Colossal-AI has been accepted as an official tutorial by top conferences such as SC, AAAI, PPoPP, and CVPR.
