Boost.Redis is a high-level Redis client library built on top of Boost.Asio that implements the Redis protocol RESP3. The requirements for using Boost.Redis are:

- Boost. The library is included in Boost distributions starting with 1.84.

- C++17 or higher.

- Redis 6 or higher (must support RESP3).

- Gcc (11, 12), Clang (11, 13, 14) and Visual Studio (16 2019, 17 2022).

- Have basic-level knowledge about Redis and Boost.Asio.

The latest release can be downloaded on https://github.com/boostorg/redis/releases. The library headers can be found in the include subdirectory and a compilation of the source

#include <boost/redis/src.hpp>

is required. The simplest way to do it is to include this header in no more than one source file in your applications. To build the examples and tests cmake is supported, for example

# Linux

$ BOOST_ROOT=/opt/boost_1_84_0 cmake --preset g++-11

# Windows

$ cmake -G "Visual Studio 17 2022" -A x64 -B bin64 -DCMAKE_TOOLCHAIN_FILE=C:/vcpkg/scripts/buildsystems/vcpkg.cmake

Let us start with a simple application that uses a short-lived connection to send a ping command to Redis

auto co_main(config const& cfg) -> net::awaitable<void>

{

auto conn = std::make_shared<connection>(co_await net::this_coro::executor);

conn->async_run(cfg, {}, net::consign(net::detached, conn));

// A request containing only a ping command.

request req;

req.push("PING", "Hello world");

// Response where the PONG response will be stored.

response<std::string> resp;

// Executes the request.

co_await conn->async_exec(req, resp, net::deferred);

conn->cancel();

std::cout << "PING: " << std::get<0>(resp).value() << std::endl;

}

The roles played by the async_run and async_exec functions are

- async_exec: Execute the commands contained in the request and store the individual responses in the resp object. Can be called from multiple places in your code concurrently.

- async_run: Resolve, connect, ssl-handshake, resp3-handshake, health-checks, reconnection and coordinate low-level read and write operations (among other things).

Redis servers can also send a variety of pushes to the client.

The connection class supports server pushes by means of the

boost::redis::connection::async_receive function, which can be

called in the same connection that is being used to execute commands.

The coroutine below shows how to use it

auto

receiver(std::shared_ptr<connection> conn) -> net::awaitable<void>

{

request req;

req.push("SUBSCRIBE", "channel");

generic_response resp;

conn->set_receive_response(resp);

// Loop while reconnection is enabled

while (conn->will_reconnect()) {

// Reconnect to channels.

co_await conn->async_exec(req, ignore, net::deferred);

// Loop reading Redis pushes.

for (;;) {

error_code ec;

co_await conn->async_receive(resp, net::redirect_error(net::use_awaitable, ec));

if (ec)

break; // Connection lost, break so we can reconnect to channels.

// Use the response resp in some way and then clear it.

...

consume_one(resp);

}

}

}
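The call to consume_one in the loop removes a single push from the response rather than clearing everything. As a rough illustration of what that involves on a flat, pre-order response, the sketch below erases the first complete message from a vector of nodes. The node struct and consume_first are hypothetical stand-ins written only for this example, not the library's types or implementation, and the sketch assumes that every top-level message starts with a node at depth 0.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-in for a response node; not the library's type.
struct node {
   std::string data_type;  // e.g. "push" or "blob-string"
   std::size_t depth;      // 0 for the root of each message
   std::string value;      // empty for aggregates
};

// Erases the first complete message, i.e. the first depth-0 node and
// every deeper node that follows it. Returns how many nodes were removed.
std::size_t consume_first(std::vector<node>& resp)
{
   if (resp.empty())
      return 0;

   // Advance until the next root node (or the end of the vector).
   std::size_t n = 1;
   while (n < resp.size() && resp[n].depth != 0)
      ++n;

   resp.erase(resp.begin(), resp.begin() + static_cast<std::ptrdiff_t>(n));
   return n;
}
```

With two pushes buffered in the same vector, calling consume_first once leaves the second push intact, which is the behaviour a blanket clear() would not give.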

Redis requests are composed of one or more commands (in the Redis documentation they are called pipelines). For example

// Some example containers.

std::list<std::string> list {...};

std::map<std::string, mystruct> map { ...};

// The request can contain multiple commands.

request req;

// Command with variable length of arguments.

req.push("SET", "key", "some value", "EX", "2");

// Pushes a list.

req.push_range("SUBSCRIBE", list);

// Same as above but as an iterator range.

req.push_range("SUBSCRIBE", std::cbegin(list), std::cend(list));

// Pushes a map.

req.push_range("HSET", "key", map);

Sending a request to Redis is performed with boost::redis::connection::async_exec as already stated.

The boost::redis::request::config object inside the request dictates how the

boost::redis::connection should handle the request in some important situations. The

reader is advised to read it carefully.
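To make the idea of a request as a pipeline more concrete, the sketch below shows how commands can be laid out on the wire: each command is a RESP array of bulk strings, and a pipeline is simply their concatenation. The helpers encode_command and encode_pipeline are hypothetical names used for illustration; they are not part of Boost.Redis and say nothing about how the library builds its payload internally.

```cpp
#include <string>
#include <vector>

using command = std::vector<std::string>;

// Encodes one command as a RESP array of bulk strings:
// *<argc>\r\n followed by $<len>\r\n<arg>\r\n for each argument.
std::string encode_command(command const& cmd)
{
   std::string out = "*" + std::to_string(cmd.size()) + "\r\n";
   for (auto const& arg : cmd) {
      out += "$" + std::to_string(arg.size()) + "\r\n";
      out += arg;
      out += "\r\n";
   }
   return out;
}

// A pipeline is the concatenation of its encoded commands.
std::string encode_pipeline(std::vector<command> const& cmds)
{
   std::string out;
   for (auto const& cmd : cmds)
      out += encode_command(cmd);
   return out;
}
```

Because the commands are concatenated into one buffer, the whole request can be handed to the socket in a single write.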

Boost.Redis uses the following strategy to support Redis responses

- Static: boost::redis::response is used for requests whose number of commands is not dynamic.

- Dynamic: Otherwise use boost::redis::generic_response.

For example, the request below has three commands

request req;

req.push("PING");

req.push("INCR", "key");

req.push("QUIT");

and its response also has three elements and can be read in the following response object

response<std::string, int, std::string>

The response behaves as a tuple and must

have as many elements as the request has commands (exceptions below).

It is also necessary that each tuple element is capable of storing the

response to the command it refers to, otherwise an error will occur.

To ignore responses to individual commands in the request use the tag

boost::redis::ignore_t, for example

// Ignore the second and last responses.

response<std::string, boost::redis::ignore_t, std::string, boost::redis::ignore_t>

The following table provides the resp3-types returned by some Redis commands

| Command | RESP3 type | Documentation |

|---|---|---|

| lpush | Number | https://redis.io/commands/lpush |

| lrange | Array | https://redis.io/commands/lrange |

| set | Simple-string, null or blob-string | https://redis.io/commands/set |

| get | Blob-string | https://redis.io/commands/get |

| smembers | Set | https://redis.io/commands/smembers |

| hgetall | Map | https://redis.io/commands/hgetall |

To map these RESP3 types into a C++ data structure use the table below

| RESP3 type | Possible C++ type | Type |
|---|---|---|
| Simple-string | std::string | Simple |
| Simple-error | std::string | Simple |
| Blob-string | std::string, std::vector | Simple |
| Blob-error | std::string, std::vector | Simple |
| Number | long long, int, std::size_t, std::string | Simple |
| Double | double, std::string | Simple |
| Null | std::optional<T> | Simple |
| Array | std::vector, std::list, std::array, std::deque | Aggregate |
| Map | std::vector, std::map, std::unordered_map | Aggregate |
| Set | std::vector, std::set, std::unordered_set | Aggregate |
| Push | std::vector, std::map, std::unordered_map | Aggregate |

For example, the response to the request

request req;

req.push("HELLO", 3);

req.push_range("RPUSH", "key1", vec);

req.push_range("HSET", "key2", map);

req.push("LRANGE", "key3", 0, -1);

req.push("HGETALL", "key4");

req.push("QUIT");

can be read in the tuple below

response<

redis::ignore_t, // hello

int, // rpush

int, // hset

std::vector<T>, // lrange

std::map<U, V>, // hgetall

std::string // quit

> resp;

Where both are passed to async_exec as shown elsewhere

co_await conn->async_exec(req, resp, net::deferred);

If the intention is to ignore responses altogether use ignore

// Ignores the response

co_await conn->async_exec(req, ignore, net::deferred);

Responses that contain nested aggregates or heterogeneous data types will be given special treatment later in The general case. As of this writing, not all RESP3 types are used by the Redis server, which means in practice users will be concerned with a reduced subset of the RESP3 specification.

Commands that have no response like

- "SUBSCRIBE"

- "PSUBSCRIBE"

- "UNSUBSCRIBE"

must NOT be included in the response tuple. For example, the request below

request req;

req.push("PING");

req.push("SUBSCRIBE", "channel");

req.push("QUIT");

must be read in this tuple response<std::string, std::string>, that has static size two.
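One way to reason about the required tuple size is to count only the commands that produce a direct reply. The helpers below are a hypothetical illustration, not library code, and they treat only the three commands listed above as having no response.

```cpp
#include <algorithm>
#include <array>
#include <cstddef>
#include <string_view>
#include <vector>

// True for commands that get a direct reply. Only the three commands
// named in the text are treated as having no response.
bool has_response(std::string_view cmd)
{
   constexpr std::array<std::string_view, 3> no_reply{
      "SUBSCRIBE", "PSUBSCRIBE", "UNSUBSCRIBE"};
   return std::find(no_reply.begin(), no_reply.end(), cmd) == no_reply.end();
}

// Number of elements the response tuple needs for the given commands.
std::size_t expected_responses(std::vector<std::string_view> const& cmds)
{
   return static_cast<std::size_t>(
      std::count_if(cmds.begin(), cmds.end(), has_response));
}
```

For the request above (PING, SUBSCRIBE, QUIT) the count is two, matching the two-element tuple.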

It is not uncommon for apps to access keys that do not exist or that have already expired in the Redis server. To deal with these cases Boost.Redis provides support for std::optional. To use it, wrap your type in std::optional like this

response<

std::optional<A>,

std::optional<B>,

...

> resp;

co_await conn->async_exec(req, resp, net::deferred);

Everything else stays pretty much the same.

To read responses to transactions we must first observe that Redis will queue the transaction commands and send their individual responses as elements of an array. The array is itself the response to the EXEC command. For example, to read the response to this request

req.push("MULTI");

req.push("GET", "key1");

req.push("LRANGE", "key2", 0, -1);

req.push("HGETALL", "key3");

req.push("EXEC");

use the following response type

using boost::redis::ignore;

using exec_resp_type =

response<

std::optional<std::string>, // get

std::optional<std::vector<std::string>>, // lrange

std::optional<std::map<std::string, std::string>> // hgetall

>;

response<

boost::redis::ignore_t, // multi

boost::redis::ignore_t, // get

boost::redis::ignore_t, // lrange

boost::redis::ignore_t, // hgetall

exec_resp_type // exec

> resp;

co_await conn->async_exec(req, resp, net::deferred);

For a complete example see cpp20_containers.cpp.

There are cases where responses to Redis commands won't fit in the model presented above. Some examples are

- Commands (like set) whose responses don't have a fixed RESP3 type. Expecting an int and receiving a blob-string will result in an error.

- RESP3 aggregates that contain nested aggregates can't be read in STL containers.

- Transactions with a dynamic number of commands can't be read in a response.

To deal with these cases Boost.Redis provides the boost::redis::resp3::node type

abstraction, that is the most general form of an element in a

response, be it a simple RESP3 type or the element of an aggregate. It

is defined like this

template <class String>

struct basic_node {

// The RESP3 type of the data in this node.

type data_type;

// The number of elements of an aggregate (or 1 for simple data).

std::size_t aggregate_size;

// The depth of this node in the response tree.

std::size_t depth;

// The actual data. For aggregate types this is always empty.

String value;

};

Any response to a Redis command can be received in a boost::redis::generic_response. The vector can be seen as a pre-order view of the response tree. Using it is no different from using other types

// Receives any RESP3 simple or aggregate data type.

boost::redis::generic_response resp;

co_await conn->async_exec(req, resp, net::deferred);

For example, suppose we want to retrieve a hash data structure from Redis with HGETALL. Some of the options are

- boost::redis::generic_response: Works always.

- std::vector<std::string>: Efficient and flat, all elements as string.

- std::map<std::string, std::string>: Efficient if you need the data as a std::map.

- std::map<U, V>: Efficient if you are storing serialized data. Avoids temporaries and requires boost_redis_from_bulk for U and V.

In addition to the above users can also use unordered versions of the

containers. The same reasoning applies to sets e.g. SMEMBERS

and other data structures in general.
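To illustrate the pre-order view, the sketch below rebuilds a std::map from the flat node sequence that an HGETALL reply would produce. The node struct is a simplified, hypothetical stand-in for the node type shown earlier (the data type is a plain string and the aggregate size is left out), and to_map is not a library function. It assumes a root map node at depth 0 followed by alternating field and value nodes at depth 1.

```cpp
#include <cstddef>
#include <map>
#include <optional>
#include <string>
#include <vector>

// Simplified stand-in for a response node; not the library's type.
struct node {
   std::string data_type;
   std::size_t depth;
   std::string value;
};

// Rebuilds field/value pairs from a pre-order sequence. Returns nullopt
// when the sequence does not have the shape described above.
std::optional<std::map<std::string, std::string>>
to_map(std::vector<node> const& nodes)
{
   if (nodes.empty() || nodes.front().data_type != "map" || nodes.front().depth != 0)
      return std::nullopt;

   // After the root, children must come in field/value pairs.
   if ((nodes.size() - 1) % 2 != 0)
      return std::nullopt;

   std::map<std::string, std::string> out;
   for (std::size_t i = 1; i < nodes.size(); i += 2) {
      if (nodes[i].depth != 1 || nodes[i + 1].depth != 1)
         return std::nullopt;
      out[nodes[i].value] = nodes[i + 1].value;
   }
   return out;
}
```

Reading directly into a std::map, as listed in the options above, avoids this manual step; the generic form is mainly useful when the shape of the reply is not known in advance.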

Boost.Redis supports serialization of user defined types by means of the following customization points

// Serialize.

void boost_redis_to_bulk(std::string& to, mystruct const& obj);

// Deserialize

void boost_redis_from_bulk(mystruct& obj, char const* p, std::size_t size, boost::system::error_code& ec);

These functions are accessed over ADL and therefore they must be imported in the global namespace by the user. In the Examples section the reader can find examples showing how to serialize using json and protobuf.
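As an illustration of the kind of logic that would sit behind these customization points, the sketch below round-trips a hypothetical mystruct with two integer fields, encoded as the text "a,b". The names serialize and deserialize, the struct layout and the text encoding are assumptions made only for this example. To stay self-contained it uses std::error_code where the library's signature uses boost::system::error_code, and it deals only with the payload, not with how the payload is framed on the wire.

```cpp
#include <charconv>
#include <cstddef>
#include <string>
#include <system_error>

// Hypothetical user type.
struct mystruct {
   int a = 0;
   int b = 0;
};

// Appends the payload "a,b" to the output buffer.
void serialize(std::string& to, mystruct const& obj)
{
   to += std::to_string(obj.a);
   to += ',';
   to += std::to_string(obj.b);
}

// Parses "a,b" and reports malformed input through ec.
// std::error_code stands in for boost::system::error_code here.
void deserialize(mystruct& obj, char const* p, std::size_t size, std::error_code& ec)
{
   char const* const end = p + size;
   mystruct tmp;

   auto r1 = std::from_chars(p, end, tmp.a);
   if (r1.ec != std::errc{} || r1.ptr == end || *r1.ptr != ',') {
      ec = std::make_error_code(std::errc::invalid_argument);
      return;
   }

   auto r2 = std::from_chars(r1.ptr + 1, end, tmp.b);
   if (r2.ec != std::errc{} || r2.ptr != end) {
      ec = std::make_error_code(std::errc::invalid_argument);
      return;
   }

   obj = tmp;  // Only commit once the whole input has been validated.
}
```

Reporting failures through the error code rather than throwing keeps the deserializer in line with the error-code style of the signature above.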

The examples below show how to use the features discussed so far

- cpp20_intro.cpp: Does not use awaitable operators.

- cpp20_intro_tls.cpp: Communicates over TLS.

- cpp20_containers.cpp: Shows how to send and receive STL containers and how to use transactions.

- cpp20_json.cpp: Shows how to serialize types using Boost.Json.

- cpp20_protobuf.cpp: Shows how to serialize types using protobuf.

- cpp20_resolve_with_sentinel.cpp: Shows how to resolve a master address using sentinels.

- cpp20_subscriber.cpp: Shows how to implement pubsub with reconnection re-subscription.

- cpp20_echo_server.cpp: A simple TCP echo server.

- cpp20_chat_room.cpp: A command line chat built on Redis pubsub.

- cpp17_intro.cpp: Uses callbacks and requires C++17.

- cpp17_intro_sync.cpp: Runs async_run in a separate thread and performs synchronous calls to async_exec.

The main function used in some async examples has been factored out in the main.cpp file.

This document benchmarks the performance of TCP echo servers I implemented in different languages using different Redis clients. The main motivations for choosing an echo server are

- Simple to implement and does not require expertise level in most languages.

- I/O bound: Echo servers have very low CPU consumption in general and therefore are excellent to measure how a program handles concurrent requests.

- It simulates very well a typical backend in regard to concurrency.

I also imposed some constraints on the implementations

- It should be simple enough and not require writing too much code.

- Favor the use of standard idioms and avoid optimizations that require expert-level knowledge.

- Avoid the use of complex things like connection and thread pools.

To reproduce these results run one of the echo-server programs in one terminal and the echo-server-client in another.

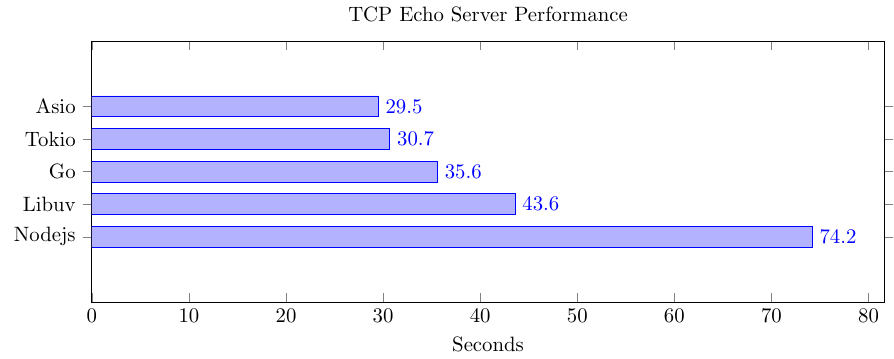

First I tested a pure TCP echo server, i.e. one that sends the messages directly to the client without interacting with Redis. The result can be seen below

The tests were performed with 1000 concurrent TCP connections on the localhost where latency is 0.07ms on average on my machine. On higher latency networks the difference among libraries is expected to decrease.

- I expected Libuv to have similar performance to Asio and Tokio.

- I did expect nodejs to come a little behind given it is javascript code. Otherwise I did expect it to have similar performance to libuv since it is the framework behind it.

- Go did surprise me: faster than nodejs and libuv!

The code used in the benchmarks can be found at

- Asio: A variation of this Asio example.

- Libuv: Taken from this Libuv example.

- Tokio: Taken from here.

- Nodejs

- Go

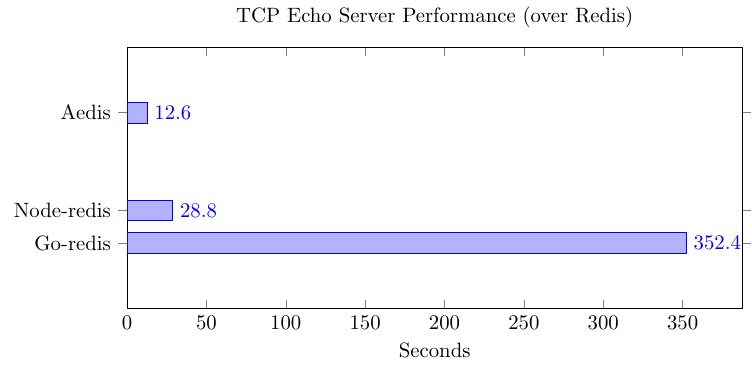

This is similar to the echo server described above but messages are echoed by Redis and not by the echo-server itself, which acts as a proxy between the client and the Redis server. The results can be seen below

The tests were performed on a network where latency is 35ms on average, otherwise it uses the same number of TCP connections as the previous example.

As the reader can see, the Libuv and the Rust tests are not depicted in the graph. The reasons are

- redis-rs: This client comes so far behind that it can't even be represented together with the other benchmarks without making them look insignificant. I don't know for sure why it is so slow; I suppose it has something to do with its lack of automatic pipelining support. In fact, the more TCP connections I launch the worse its performance gets.

- Libuv: I left it out because it would require me to write too much C code. More specifically, I would have to use hiredis and implement support for pipelines manually.

The code used in the benchmarks can be found at

Redis clients have to support automatic pipelining to have competitive performance. For updates to this document follow https://github.com/boostorg/redis.
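The idea behind automatic pipelining can be sketched as a queue that merges all pending requests into a single write. The write_queue class below is a hypothetical, single-threaded illustration; it is not how Boost.Redis or any of the benchmarked clients implement it, and it assumes the payloads pushed into it are already encoded.

```cpp
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

// Collects encoded requests while a write is in flight and hands them
// out as one payload, so many requests cost a single socket write.
class write_queue {
public:
   void push(std::string payload) { pending_.push_back(std::move(payload)); }

   std::size_t pending() const { return pending_.size(); }

   // Concatenates everything queued so far and empties the queue.
   std::string coalesce()
   {
      std::string out;
      for (auto const& p : pending_)
         out += p;
      pending_.clear();
      return out;
   }

private:
   std::vector<std::string> pending_;
};
```

A client without this step issues one write per request, which is consistent with performance degrading as the number of concurrent connections grows.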

The main reason why I started writing Boost.Redis was to have a client compatible with the Asio asynchronous model. As I made progress I could also address what I considered weaknesses in other libraries. Due to time constraints I won't be able to give a detailed comparison with each client listed in the official list. Instead I will focus on the most popular C++ client on github in number of stars, namely redis-plus-plus.

Before we start it is important to mention some of the things redis-plus-plus does not support

- The latest version of the communication protocol RESP3. Without that it is impossible to support some important Redis features like client side caching, among other things.

- Coroutines.

- Reading responses directly in user data structures to avoid creating temporaries.

- Error handling with support for error-code.

- Cancellation.

The remaining points will be addressed individually. Let us first have a look at what sending a command, a pipeline and a transaction look like

auto redis = Redis("tcp://127.0.0.1:6379");

// Send commands

redis.set("key", "val");

auto val = redis.get("key"); // val is of type OptionalString.

if (val)

std::cout << *val << std::endl;

// Sending pipelines

auto pipe = redis.pipeline();

auto pipe_replies = pipe.set("key", "value")

.get("key")

.rename("key", "new-key")

.rpush("list", {"a", "b", "c"})

.lrange("list", 0, -1)

.exec();

// Parse reply with reply type and index.

auto set_cmd_result = pipe_replies.get<bool>(0);

// ...

// Sending a transaction

auto tx = redis.transaction();

auto tx_replies = tx.incr("num0")

.incr("num1")

.mget({"num0", "num1"})

.exec();

auto incr_result0 = tx_replies.get<long long>(0);

// ...

Some of the problems with this API are

- Heterogeneous treatment of commands, pipelines and transactions. This makes auto-pipelining impossible.

- Any API that sends individual commands has a very restricted scope of usability and should be avoided for performance reasons.

- The API imposes exceptions on users, no error-code overload is provided.

- No way to reuse the buffer for new calls to e.g. redis.get in order to avoid further dynamic memory allocations.

- Error handling of resolve and connection is not clear.

According to the documentation, pipelines in redis-plus-plus have the following characteristics

NOTE: By default, creating a Pipeline object is NOT cheap, since it creates a new connection.

This is clearly a downside in the API as pipelines should be the default way of communicating and not an exception, paying such a high price for each pipeline imposes a severe cost in performance. Transactions also suffer from the very same problem.

NOTE: Creating a Transaction object is NOT cheap, since it creates a new connection.

In Boost.Redis there is no difference between sending one command, a pipeline or a transaction because requests are decoupled from the IO objects.

redis-plus-plus also supports async interface, however, async support for Transaction and Subscriber is still on the way.

The async interface depends on third-party event library, and so far, only libuv is supported.

Async code in redis-plus-plus looks like the following

auto async_redis = AsyncRedis(opts, pool_opts);

Future<string> ping_res = async_redis.ping();

cout << ping_res.get() << endl;

As the reader can see, the async interface is based on futures, which are also known to have bad performance. The biggest problem with this async design, however, is that it makes it impossible to write asynchronous programs correctly since it starts an async operation on every command sent instead of enqueueing a message and triggering a write when it can be sent. It is also not clear how pipelines are realised with this design (if at all).

The High-Level page documents all public types.

Acknowledgement to people that helped shape Boost.Redis

- Richard Hodges (madmongo1): For very helpful support with Asio, the design of asynchronous programs, etc.

- Vinícius dos Santos Oliveira (vinipsmaker): For useful discussion about how Boost.Redis consumes buffers in the read operation.

- Petr Dannhofer (Eddie-cz): For helping me understand how the AUTH and HELLO command can influence each other.

- Mohammad Nejati (ashtum): For pointing out scenarios where calls to async_exec should fail when the connection is lost.

- Klemens Morgenstern (klemens-morgenstern): For useful discussion about timeouts, cancellation, synchronous interfaces and general help with Asio.

- Vinnie Falco (vinniefalco): For general suggestions about how to improve the code and the documentation.

- Bram Veldhoen (bveldhoen): For contributing a Redis-streams example.

Also many thanks to all individuals that participated in the Boost review

- Zach Laine: https://lists.boost.org/Archives/boost/2023/01/253883.php

- Vinnie Falco: https://lists.boost.org/Archives/boost/2023/01/253886.php

- Christian Mazakas: https://lists.boost.org/Archives/boost/2023/01/253900.php

- Ruben Perez: https://lists.boost.org/Archives/boost/2023/01/253915.php

- Dmitry Arkhipov: https://lists.boost.org/Archives/boost/2023/01/253925.php

- Alan de Freitas: https://lists.boost.org/Archives/boost/2023/01/253927.php

- Mohammad Nejati: https://lists.boost.org/Archives/boost/2023/01/253929.php

- Sam Hartsfield: https://lists.boost.org/Archives/boost/2023/01/253931.php

- Miguel Portilla: https://lists.boost.org/Archives/boost/2023/01/253935.php

- Robert A.H. Leahy: https://lists.boost.org/Archives/boost/2023/01/253928.php

The Reviews can be found at: https://lists.boost.org/Archives/boost/2023/01/date.php. The thread with the ACCEPT from the review manager can be found here: https://lists.boost.org/Archives/boost/2023/01/253944.php.

- (Issue 205) Improves reaction time to disconnection by using wait_for_one_error instead of wait_for_all. The function connection::async_run was also changed to return EOF to the user when that error is received from the server. That is a breaking change.

- (Issue 210) Fixes the adapter of empty nested responses.

- (Issues 211 and 212) Fixes the reconnect loop that would hang under certain conditions, see the linked issues for more details.

- (Issue 219) Changes the default log level from disabled to debug.

- (Issue 170) Under load and on low-latency networks it is possible to start receiving responses before the write operation has completed and while the request is still marked as staged and not written. This messes up the heuristics that classify responses as unsolicited or not.

- (Issue 168). Provides a way of passing a custom SSL context to the connection. The design here differs from that of Boost.Beast and Boost.MySql since in Boost.Redis the connection owns the context instead of only storing a reference to a user provided one. This is ok because apps need only one connection for their entire application, which makes the overhead of one ssl-context per connection negligible.

- (Issue 181). See a detailed description of this bug in this comment.

- (Issue 182). Sets "default" as the default value of config::username. This makes it simpler to use the requirepass configuration in Redis.

- (Issue 189). Fixes narrowing conversion by using std::size_t instead of std::uint64_t for the sizes of bulks and aggregates. The code relies now on std::from_chars returning an error if a value that does not fit in 32 bits is received on platforms on which std::size_t is 32 bits wide.

- Deprecates the async_receive overload that takes a response. Users should now first call set_receive_response to avoid constantly and unnecessarily setting the same response.

- Uses std::function to type erase the response adapter. This change should not influence users in any way but allowed important simplification in the connection's internals. This resulted in massive performance improvement.

- The connection has a new member get_usage() that returns the connection usage information, such as number of bytes written, received etc.

- There are massive performance improvements in the consuming of server pushes which are now communicated with an asio::channel and therefore can be buffered which avoids blocking the socket read-loop. Batch reads are also supported by means of channel.try_send and buffered messages can be consumed synchronously with connection::receive. The function boost::redis::consume_one has been added to simplify processing multiple server pushes contained in the same generic_response. IMPORTANT: These changes may result in more than one push in the response when connection::async_receive resumes. The user must therefore be careful when calling resp.clear(): either ensure that all messages have been processed or just use consume_one.

- Adds boost::redis::config::database_index to make it possible to choose a database before starting to run commands e.g. after an automatic reconnection.

- Massive performance improvement. One of my tests went from 140k req/s to 390k/s. This was possible after a parser simplification that reduced the number of reschedules and buffer rotations.

- Adds Redis stream example.

- Renames the project to Boost.Redis and moves the code into namespace boost::redis.

- As pointed out in the reviews the to_bulk and from_bulk names were too generic for ADL customization points. They gained the prefix boost_redis_.

- Moves boost::redis::resp3::request to boost::redis::request.

- Adds new typedef boost::redis::response that should be used instead of std::tuple.

- Adds new typedef boost::redis::generic_response that should be used instead of std::vector<resp3::node<std::string>>.

- Renames redis::ignore to redis::ignore_t.

- Changes async_exec to receive a redis::response instead of an adapter, namely, instead of passing adapt(resp) users should pass resp directly.

- Introduces boost::redis::adapter::result to store responses to commands including possible resp3 errors without losing the error diagnostic part. To access values now use std::get<N>(resp).value() instead of std::get<N>(resp).

- Implements full-duplex communication. Before these changes the connection would wait for a response to arrive before sending the next one. Now requests are continuously coalesced and written to the socket. request::coalesce became unnecessary and was removed. I could measure significant performance gains with these changes.

- Improves serialization examples using Boost.Describe to serialize to JSON and protobuf. See cpp20_json.cpp and cpp20_protobuf.cpp for more details.

- Upgrades to Boost 1.81.0.

- Fixes build with libc++.

- Adds high-level functionality to the connection classes. For example, boost::redis::connection::async_run will automatically resolve, connect, reconnect and perform health checks.

- Renames retry_on_connection_lost to cancel_if_unresponded. (v1.4.1)

- Removes dependency on Boost.Hana, boost::string_view, Boost.Variant2 and Boost.Spirit.

- Fixes build and setup CI on windows.

- Upgrades to Boost 1.80.0

- Removes automatic sending of the HELLO command. This can't be implemented properly without bloating the connection class. It is now a user responsibility to send HELLO. Requests that contain it have priority over other requests and will be moved to the front of the queue, see aedis::request::config

- Automatic name resolving and connecting have been removed from aedis::connection::async_run. Users have to do this step manually now. The reason for this change is that having them built-in doesn't offer the flexibility that is needed for boost users.

- Removes health checks and idle timeout. This functionality must now be implemented by users, see the examples. This is part of making Aedis useful to a larger audience and suitable for the Boost review process.

- The aedis::connection is now using a typedef to a net::ip::tcp::socket and aedis::ssl::connection to net::ssl::stream<net::ip::tcp::socket>. Users that need to use other stream types must now specialize aedis::basic_connection.

- Adds a low level example of async code.

- aedis::adapt supports now tuples created with std::tie. aedis::ignore is now an alias to the type of std::ignore.

- Provides allocator support for the internal queue used in the aedis::connection class.

- Changes the behaviour of async_run to complete with success if asio::error::eof is received. This makes it easier to write composed operations with awaitable operators.

- Adds allocator support in the aedis::request (a contribution from Klemens Morgenstern).

- Renames aedis::request::push_range2 to push_range. The suffix 2 was used for disambiguation. Klemens fixed it with SFINAE.

- Renames fail_on_connection_lost to aedis::request::config::cancel_on_connection_lost. Now, it will only cause connections to be canceled when async_run completes.

- Introduces aedis::request::config::cancel_if_not_connected which will cause a request to be canceled if async_exec is called before a connection has been established.

- Introduces new request flag aedis::request::config::retry that if set to true will cause the request to not be canceled when it was sent to Redis but remained unresponded after async_run completed. It provides a way to avoid executing commands twice.

- Removes the aedis::connection::async_run overload that takes request and adapter as parameters.

- Changes the way aedis::adapt() behaves with std::vector<aedis::resp3::node<T>>. Receiving RESP3 simple errors, blob errors or null won't cause an error but will be treated as normal response. It is the user responsibility to check the content in the vector.

- Fixes a bug in connection::cancel(operation::exec). Now this call will only cancel non-written requests.

- Implements per-operation implicit cancellation support for aedis::connection::async_exec. The call co_await (conn.async_exec(...) || timer.async_wait(...)) will cancel the request as long as it has not been written.

- Changes aedis::connection::async_run completion signature to f(error_code). This is how it was in the past, the second parameter was not helpful.

- Renames operation::receive_push to aedis::operation::receive.

- Removes coalesce_requests from the aedis::connection::config, it became a request property now, see aedis::request::config::coalesce.

- Removes max_read_size from the aedis::connection::config. The maximum read size can be specified now as a parameter of the aedis::adapt() function.

- Removes aedis::sync class, see intro_sync.cpp for how to perform synchronous and thread safe calls. This is possible in Boost 1.80 only as it requires boost::asio::deferred.

- Moves from boost::optional to std::optional. This is part of moving to C++17.

- Changes the behaviour of the second aedis::connection::async_run overload so that it always returns an error when the connection is lost.

- Adds TLS support, see intro_tls.cpp.

- Adds an example that shows how to resolve addresses over sentinels, see subscriber_sentinel.cpp.

- Adds aedis::connection::timeouts::resp3_handshake_timeout. This is the timeout used to send the HELLO command.

- Adds aedis::endpoint where in addition to host and port, users can optionally provide username, password and the expected server role (see aedis::error::unexpected_server_role).

- aedis::connection::async_run checks whether the server role received in the hello command is equal to the expected server role specified in aedis::endpoint. To skip this check leave the role variable empty.

- Removes reconnect functionality from aedis::connection. It is possible in simple reconnection strategies but bloats the class in more complex scenarios, for example, with sentinel, authentication and TLS. This is trivial to implement in a separate coroutine. As a result the enum event and async_receive_event have been removed from the class too.

- Fixes a bug in connection::async_receive_push that prevented passing any response adapter other than adapt(std::vector<node>).

- Changes the behaviour of aedis::adapt() that caused RESP3 errors to be ignored. One consequence of it is that connection::async_run would not exit with failure in servers that required authentication.

- Changes the behaviour of connection::async_run that would cause it to complete with success when an error in the connection::async_exec occurred.

- Ports the buildsystem from autotools to CMake.

- Adds experimental cmake support for windows users.

- Adds new class aedis::sync that wraps an aedis::connection in a thread-safe and synchronous API. All free functions from the sync.hpp are now member functions of aedis::sync.

- Splits aedis::connection::async_receive_event in two functions, one to receive events and another for server side pushes, see aedis::connection::async_receive_push.

- Removes collision between aedis::adapter::adapt and aedis::adapt.

- Adds connection::operation enum to replace cancel_* member functions with a single cancel function that gets the operations that should be cancelled as argument.

- Bugfix: a bug on reconnect from a state where the connection object had unsent commands. It could cause async_exec to never complete under certain conditions.

- Bugfix: Documentation of adapt() functions was missing from Doxygen.

- Adds experimental::exec and receive_event functions to offer a thread safe and synchronous way of executing requests across threads. See intro_sync.cpp and subscriber_sync.cpp for examples.

- connection::async_read_push was renamed to async_receive_event.

- connection::async_receive_event is now being used to communicate internal events to the user, such as resolve, connect, push etc. For examples see cpp20_subscriber.cpp and connection::event.

- The aedis directory has been moved to include to look more similar to Boost libraries. Users should now replace -I/aedis-path with -I/aedis-path/include in the compiler flags.

- The AUTH and HELLO commands are now sent automatically. This change was necessary to implement reconnection. The username and password used in AUTH should be provided by the user on connection::config.

- Adds support for reconnection. See connection::enable_reconnect.

- Fixes a bug in the connection::async_run(host, port) overload that was causing crashes on reconnection.

- Fixes the executor usage in the connection class. Before these changes it was imposing any_io_executor on users.

- connection::async_receiver_event is not cancelled anymore when connection::async_run exits. This change makes user code simpler.

- connection::async_exec with host and port overload has been removed. Use the other connection::async_run overload.

- The host and port parameters from connection::async_run have been moved to connection::config to better support authentication and failover.

- Many simplifications in the chat_room example.

- Fixes the build with clang compilers and makes some improvements in the documentation.

- Fixes a bug that happens on very high load. (v0.2.1)

- Major rewrite of the high-level API. There is no need to use the low-level API anymore.

- No more callbacks: Sending requests follows the ASIO asynchronous model.

- Support for reconnection: Pending requests are not canceled when a connection is lost and are re-sent when a new one is established.

- The library is not sending HELLO-3 on user behalf anymore. This is important to support AUTH properly.

- Adds reconnect coroutine in the echo_server example. (v0.1.2)

- Corrects client::async_wait_for_data with make_parallel_group to launch operation. (v0.1.2)

- Improvements in the documentation. (v0.1.2)

- Avoids dynamic memory allocation in the client class after reconnection. (v0.1.2)

- Improves the documentation and adds some features to the high-level client. (v0.1.1)

- Improvements in the design and documentation.

- First release to collect design feedback.