Sr/new master #414

## What changes were proposed in this pull request?

Consider the following example:

```scala
val location = "/tmp/t"
val df = spark.range(10).toDF("id")
df.write.format("parquet").saveAsTable("tbl")
spark.sql("CREATE VIEW view1 AS SELECT id FROM tbl")
// Quote the path so that LOCATION receives a string literal.
spark.sql(s"CREATE TABLE tbl2(ID long) USING parquet LOCATION '$location'")
spark.sql("INSERT OVERWRITE TABLE tbl2 SELECT ID FROM view1")
println(spark.read.parquet(location).schema)
spark.table("tbl2").show()
```

The output column name in schema will be `id` instead of `ID`, thus the last query shows nothing from `tbl2`.

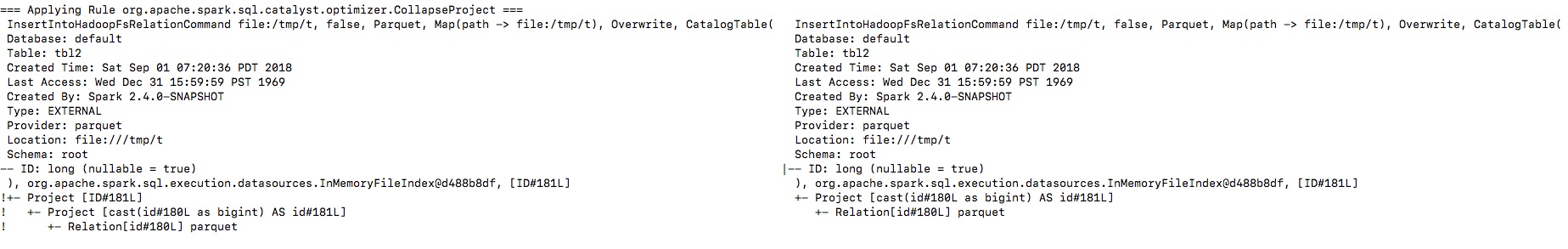

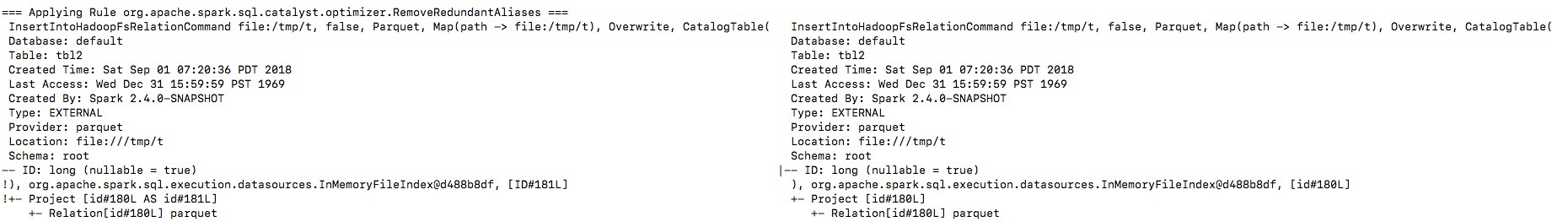

By enabling the debug message we can see that the output naming is changed from `ID` to `id`, and then the `outputColumns` in `InsertIntoHadoopFsRelationCommand` is changed in `RemoveRedundantAliases`.

**To guarantee correctness**, we should change the output columns from `Seq[Attribute]` to `Seq[String]` so that the optimizer cannot replace their names.

I will fix project elimination related rules in apache#22311 after this one.

## How was this patch tested?

Unit test.

Closes apache#22320 from gengliangwang/fixOutputSchema.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The `HiveExternalCatalogVersionsSuite` Scala 2.12 test has been failing due to a class path issue. It is marked as `ABORTED` because it fails in `beforeAll` during the data population stage.

- https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Test%20(Dashboard)/job/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/

```
org.apache.spark.sql.hive.HiveExternalCatalogVersionsSuite *** ABORTED ***
  Exception encountered when invoking run on a nested suite - spark-submit returned with exit code 1.
```

The root cause of the failure is that `runSparkSubmit` mixes 2.4.0-SNAPSHOT classes and old Spark (2.1.3/2.2.2/2.3.1) classes together during `spark-submit`. This PR adds a `non-test` execution mode to `runSparkSubmit` by removing the following environment variables:

- SPARK_TESTING
- SPARK_SQL_TESTING
- SPARK_PREPEND_CLASSES
- SPARK_DIST_CLASSPATH

Previously, the new Spark classes came after the old Spark classes in the class path, so the new ones were never seen. Spark 2.4.0 reveals this bug because of the recent data source class changes.

## How was this patch tested?

Manual test. After merging, it will be tested via Jenkins.

```sh
$ dev/change-scala-version.sh 2.12
$ build/mvn -DskipTests -Phive -Pscala-2.12 clean package
$ build/mvn -Phive -Pscala-2.12 -Dtest=none -DwildcardSuites=org.apache.spark.sql.hive.HiveExternalCatalogVersionsSuite test
...
HiveExternalCatalogVersionsSuite:
- backward compatibility
...
Tests: succeeded 1, failed 0, canceled 0, ignored 0, pending 0
All tests passed.
```

Closes apache#22340 from dongjoon-hyun/SPARK-25337.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>
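As a rough illustration of the idea (not the code in this patch, which lives in the Scala test suite), removing the test-only variables before launching a child process could look like the sketch below. The helper name `non_test_env` is hypothetical; only the four variable names come from the description above.

```python
# Variables named in the description above; they switch Spark into test mode
# or alter the class path of the child process.
TEST_ONLY_VARS = (
    "SPARK_TESTING",
    "SPARK_SQL_TESTING",
    "SPARK_PREPEND_CLASSES",
    "SPARK_DIST_CLASSPATH",
)


def non_test_env(env):
    """Return a copy of `env` without the test-only variables (hypothetical helper)."""
    return {key: value for key, value in env.items() if key not in TEST_ONLY_VARS}


parent_env = {"PATH": "/usr/bin", "SPARK_TESTING": "1", "SPARK_DIST_CLASSPATH": "/new/classes"}
print(non_test_env(parent_env))
```

The resulting dictionary could then be passed as the `env` argument of `subprocess.run` so that the child `spark-submit` starts from a clean environment.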

## What changes were proposed in this pull request?

In this PR, I propose to extend `to_json` to support any type as the element type of input arrays. It should allow converting arrays of primitive types and arrays of arrays. For example:

```sql
select to_json(array('1','2','3'))
> ["1","2","3"]
select to_json(array(array(1,2,3),array(4)))
> [[1,2,3],[4]]
```
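For readers who want to check the expected text without a Spark session, a plain-Python analogue of the two outputs above is sketched here. It only mirrors the compact JSON formatting; `to_json_compact` is a hypothetical helper and does not call Spark.

```python
import json


def to_json_compact(value):
    """Serialize nested lists to JSON text without spaces after separators."""
    return json.dumps(value, separators=(",", ":"))


print(to_json_compact(["1", "2", "3"]))    # array of strings
print(to_json_compact([[1, 2, 3], [4]]))   # array of arrays
```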

## How was this patch tested?

Added a couple of SQL tests for arrays of primitive types and arrays of arrays. I also added a round-trip test `from_json` -> `to_json`.

Closes apache#22226 from MaxGekk/to_json-array.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

SPARK-10399 introduced a performance regression in the hash computation for UTF8String.

The regression can be evaluated with the code attached in the JIRA. That code runs in about 120 us per method on my laptop (MacBook Pro 2.5 GHz Intel Core i7, RAM 16 GB 1600 MHz DDR3), while the code from branch 2.3 takes about 45 us on the same machine. After the PR, the code takes about 45 us on the master branch too.

## How was this patch tested?

Running the perf test from the JIRA.

Closes apache#22338 from mgaido91/SPARK-25317.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

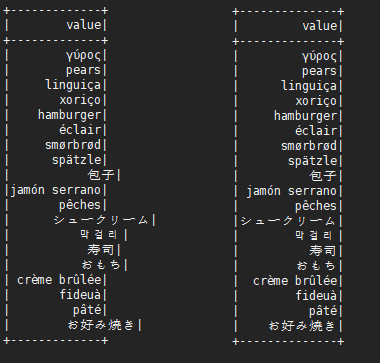

…alignment problem

This is not a perfect solution. It is designed to solve the problem with minimal complexity. It is effective for English, Chinese, Japanese, Korean and so on.

```
before:
+---+---------------------------+-------------+
|id |中国                         |s2           |
+---+---------------------------+-------------+
|1  |ab                         |[a]          |
|2  |null                       |[中国, abc]    |
|3  |ab1                        |[hello world]|
|4  |か行 きゃ(kya) きゅ(kyu) きょ(kyo) |[“中国]        |
|5  |中国(你好)a                    |[“中(国), 312] |
|6  |中国山(东)服务区                  |[“中(国)]      |
|7  |中国山东服务区                    |[中(国)]       |
|8  |                           |[中国]         |
+---+---------------------------+-------------+

after:
+---+-----------------------------------+----------------+
|id |中国                               |s2              |
+---+-----------------------------------+----------------+
|1  |ab                                 |[a]             |
|2  |null                               |[中国, abc]     |
|3  |ab1                                |[hello world]   |
|4  |か行 きゃ(kya) きゅ(kyu) きょ(kyo) |[“中国]         |
|5  |中国(你好)a                      |[“中(国), 312]|
|6  |中国山(东)服务区                 |[“中(国)]     |
|7  |中国山东服务区                     |[中(国)]      |
|8  |                                   |[中国]          |
+---+-----------------------------------+----------------+
```

## What changes were proposed in this pull request?

When the data contains wide characters such as Chinese or Japanese characters, the `show` method has an alignment problem. This PR tries to fix it.

## How was this patch tested?

(Please explain how this patch was tested. E.g. unit tests, integration tests, manual tests)

Please review http://spark.apache.org/contributing.html before opening a pull request.

Closes apache#22048 from xuejianbest/master.

Authored-by: xuejianbest <384329882@qq.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>
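The core of the fix is to pad cells by display width rather than by character count. A minimal Python sketch of that idea follows. It assumes that every character Unicode classifies as East Asian wide or fullwidth occupies two terminal columns; the patch itself may detect wide characters differently, and `display_width` and `pad_cell` are hypothetical names.

```python
import unicodedata


def display_width(text):
    """Count terminal columns, treating East Asian wide/fullwidth characters as 2."""
    return sum(2 if unicodedata.east_asian_width(ch) in ("W", "F") else 1 for ch in text)


def pad_cell(text, width):
    """Left-align `text` in a cell that is `width` display columns wide."""
    return text + " " * max(0, width - display_width(text))


for cell in ["ab", "中国", "[中国, abc]"]:
    # Every padded cell now ends at the same terminal column.
    print("|" + pad_cell(cell, 12) + "|")
```

Padding with `len(text)` instead of `display_width(text)` reproduces the misaligned "before" table, because each wide character then pushes the closing `|` one column to the right.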

…ouping key in group aggregate pandas UDF

## What changes were proposed in this pull request?

This PR proposes to add another example for multiple grouping keys in group aggregate pandas UDFs, since this feature can still confuse users.

## How was this patch tested?

Manually tested and documentation built.

Closes apache#22329 from HyukjinKwon/SPARK-25328.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

…lization Exception

## What changes were proposed in this pull request?

`mapValues` in Scala is currently not serializable. To avoid the serialization issue while running pageRank, we need to use `map` instead of `mapValues`.

Please review http://spark.apache.org/contributing.html before opening a pull request.

Closes apache#22271 from shahidki31/master_latest.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Joseph K. Bradley <joseph@databricks.com>

## What changes were proposed in this pull request?

Add a value length check in `_create_row` to forbid extra values for a custom Row in PySpark.

## How was this patch tested?

New UT in pyspark-sql.

Closes apache#22140 from xuanyuanking/SPARK-25072.

Lead-authored-by: liyuanjian <liyuanjian@baidu.com>

Co-authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>
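The kind of check described above can be sketched in plain Python as follows. This is only an illustration: `make_row_factory` is a hypothetical stand-in for a custom Row class, the error message is invented, and PySpark's real `_create_row` has a different signature.

```python
def make_row_factory(*fields):
    """Return a constructor for rows with a fixed list of field names (hypothetical)."""
    def create_row(*values):
        # Reject extra values instead of silently dropping them.
        if len(values) > len(fields):
            raise ValueError(
                "cannot create a row with %d fields from %d values"
                % (len(fields), len(values))
            )
        return dict(zip(fields, values))
    return create_row


Person = make_row_factory("name", "age")
print(Person("Alice", 11))
try:
    Person("Alice", 11, "extra")
except ValueError as error:
    print("rejected:", error)
```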

## What changes were proposed in this pull request?

Currently, when running Spark on Kubernetes, the client runs a logger that watches the K8S API for events related to the driver pod and logs them. However, for the container status part of the logging, it simply dumps the raw object, which is not human readable.

This is despite the fact that the logging class in question actually has methods to pretty-print this information but only invokes them at the end of a job.

This PR improves the logging to always use the pretty-printing methods, and additionally modifies them to include further useful information provided by the K8S API.

A similar issue also exists when tasks are lost; it will be addressed by further commits to this PR.

- [x] Improved `LoggingPodStatusWatcher`
- [x] Improved container status on task failure

## How was this patch tested?

Built and launched jobs with the updated Spark client and observed the new human-readable output.

Suggested reviewers: liyinan926 mccheah

Author: Rob Vesse <rvesse@dotnetrdf.org>

Closes apache#22215 from rvesse/SPARK-25222.

## What changes were proposed in this pull request?

The default behaviour of Spark on K8S currently is to create `emptyDir` volumes to back `SPARK_LOCAL_DIRS`. In some environments, e.g. diskless compute nodes, this may actually hurt performance because these volumes are backed by the Kubelet's node storage, which on a diskless node will typically be some remote network storage.

Even if this is enterprise-grade storage connected via a high-speed interconnect, the way Spark uses these directories as scratch space (lots of relatively small, short-lived files) has been observed to cause serious performance degradation. Therefore we would like to provide the option to use K8S's ability to instead back these `emptyDir` volumes with `tmpfs`. This PR adds a configuration option that enables `SPARK_LOCAL_DIRS` to be backed by memory-backed `emptyDir` volumes rather than the default.

Documentation is added to describe both the default behaviour and this new option and its implications. One of these is that scratch space then counts towards your pod's memory limits, so users will need to adjust their memory requests accordingly.

*NB* - This is an alternative version of PR apache#22256 reduced to just the `tmpfs` piece.

## How was this patch tested?

Ran with this option in our diskless compute environments to verify functionality.

Author: Rob Vesse <rvesse@dotnetrdf.org>

Closes apache#22323 from rvesse/SPARK-25262-tmpfs.

## What changes were proposed in this pull request?

This is a follow-up PR of apache#22200.

When casting to decimal type, if `Cast.canNullSafeCastToDecimal()`, overflow won't happen, so we don't need to check the result of `Decimal.changePrecision()`.

## How was this patch tested?

Existing tests.

Closes apache#22352 from ueshin/issues/SPARK-25208/reduce_code_size.

Authored-by: Takuya UESHIN <ueshin@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

How to reproduce the permission issue:

```sh
# build spark
./dev/make-distribution.sh --name SPARK-25330 --tgz -Phadoop-2.7 -Phive -Phive-thriftserver -Pyarn

tar -zxf spark-2.4.0-SNAPSHOT-bin-SPARK-25330.tar && cd spark-2.4.0-SNAPSHOT-bin-SPARK-25330
export HADOOP_PROXY_USER=user_a
bin/spark-sql

export HADOOP_PROXY_USER=user_b
bin/spark-sql
```

```java
Exception in thread "main" java.lang.RuntimeException: org.apache.hadoop.security.AccessControlException: Permission denied: user=user_b, access=EXECUTE, inode="/tmp/hive-$%7Buser.name%7D/user_b/668748f2-f6c5-4325-a797-fd0a7ee7f4d4":user_b:hadoop:drwx------
	at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.check(FSPermissionChecker.java:319)
	at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkTraverse(FSPermissionChecker.java:259)
	at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:205)
	at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:190)
```

The issue was introduced by this commit: apache/hadoop@feb886f. This PR reverts Hadoop 2.7 to 2.7.3 to avoid the issue.

## How was this patch tested?

Unit tests and manual tests.

Closes apache#22327 from wangyum/SPARK-25330.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

…itions parameter

## What changes were proposed in this pull request?

This adds a test following apache#21638.

## How was this patch tested?

Existing tests and new test.

Closes apache#22356 from srowen/SPARK-22357.2.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This PR removes the method `updateBytesReadWithFileSize` from `FileScanRDD` because it computes input metrics by file size, an approach meant for Hadoop 2.5 and earlier. Spark no longer supports those versions, so the method causes wrong input metric numbers.

This is a rework of apache#22232.

Closes apache#22232

## How was this patch tested?

Added tests in `FileBasedDataSourceSuite`.

Closes apache#22324 from maropu/pr22232-2.

Lead-authored-by: dujunling <dujunling@huawei.com>

Co-authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

…ases of sql/core and sql/hive

## What changes were proposed in this pull request?

In `SharedSparkSession` and `TestHive`, we need to disable the rule `ConvertToLocalRelation` for better test case coverage.

## How was this patch tested?

Identified the failures after excluding the `ConvertToLocalRelation` rule.

Closes apache#22270 from dilipbiswal/SPARK-25267-final.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Add test cases for `fromString`.

## How was this patch tested?

N/A

Closes apache#22345 from gatorsmile/addTest.

Authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Before Apache Spark 2.3, table properties were ignored when writing data to a Hive table (created with `STORED AS PARQUET/ORC` syntax), because the compression configurations were not passed to the `FileFormatWriter` in `hadoopConf`. This was fixed in apache#20087. However, for CTAS with `USING PARQUET/ORC` syntax, table properties were also ignored during `convertMetastore`, so the test case for CTAS was not supported.

Now that this has been fixed in apache#20522, the test case should be enabled too.

## How was this patch tested?

This only re-enables the test cases of the previous PR.

Closes apache#22302 from fjh100456/compressionCodec.

Authored-by: fjh100456 <fu.jinhua6@zte.com.cn>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

…ined names

## What changes were proposed in this pull request?

Add [flake8](http://flake8.pycqa.org) tests to find Python syntax errors and undefined names.

__E901,E999,F821,F822,F823__ are the "_showstopper_" flake8 issues that can halt the runtime with a SyntaxError, NameError, etc. Most other flake8 issues are merely "style violations" -- useful for readability, but they do not affect runtime safety.

* F821: undefined name `name`
* F822: undefined name `name` in `__all__`
* F823: local variable name referenced before assignment
* E901: SyntaxError or IndentationError
* E999: SyntaxError -- failed to compile a file into an Abstract Syntax Tree

## How was this patch tested?

$ __flake8 . --count --select=E901,E999,F821,F822,F823 --show-source --statistics__

$ __flake8 . --count --exit-zero --max-complexity=10 --max-line-length=127 --statistics__

Please review http://spark.apache.org/contributing.html before opening a pull request.

Closes apache#22266 from cclauss/patch-3.

Authored-by: cclauss <cclauss@bluewin.ch>

Signed-off-by: Holden Karau <holden@pigscanfly.ca>
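To make the E999 category concrete, the sketch below uses only the standard library to check whether a source string can be parsed into an Abstract Syntax Tree. It is not how flake8 is implemented, and `has_syntax_error` is a hypothetical helper. It also shows why undefined names (F821) need separate static analysis: such code parses fine and fails only at run time.

```python
import ast


def has_syntax_error(source):
    """Return True when `source` cannot be parsed into an AST."""
    try:
        ast.parse(source)
    except SyntaxError:
        return True
    return False


print(has_syntax_error("def f(:\n    pass\n"))   # malformed parameter list
print(has_syntax_error("x = 1\n"))               # valid code
print(has_syntax_error("print(undefined)\n"))    # parses; the NameError appears only when run
```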

## What changes were proposed in this pull request?

Fix unused imports and outdated comments in the `kafka-0-10-sql` module. (Found while I was working on [SPARK-23539](apache#22282).)

## How was this patch tested?

Existing unit tests.

Closes apache#22342 from dongjinleekr/feature/fix-kafka-sql-trivials.

Authored-by: Lee Dongjin <dongjin@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

…se in executors REST API

Add new executor-level memory metrics (JVM used memory, on/off heap execution memory, on/off heap storage memory, on/off heap unified memory, direct memory, and mapped memory), and expose them via the executors REST API. This information will help provide insight into how executor and driver JVM memory is used across the different memory regions. It can be used to help determine good values for `spark.executor.memory`, `spark.driver.memory`, `spark.memory.fraction`, and `spark.memory.storageFraction`.

## What changes were proposed in this pull request?

An `ExecutorMetrics` class is added, with `jvmUsedHeapMemory`, `jvmUsedNonHeapMemory`, `onHeapExecutionMemory`, `offHeapExecutionMemory`, `onHeapStorageMemory`, `offHeapStorageMemory`, `onHeapUnifiedMemory`, `offHeapUnifiedMemory`, `directMemory` and `mappedMemory`.

The new `ExecutorMetrics` is sent by executors to the driver as part of the Heartbeat. A heartbeat is added for the driver as well, to collect these metrics for the driver.

The `EventLoggingListener` stores information about the peak values for each metric, per active stage and executor. When a `StageCompleted` event is seen, a `StageExecutorsMetrics` event will be logged for each executor, with peak values for the stage.

The `AppStatusListener` records the peak values for each memory metric.

The new memory metrics are added to the executors REST API.

## How was this patch tested?

New unit tests have been added. This was also tested on our cluster.

Author: Edwina Lu <edlu@linkedin.com>

Author: Imran Rashid <irashid@cloudera.com>

Author: edwinalu <edwina.lu@gmail.com>

Closes apache#21221 from edwinalu/SPARK-23429.2.

## What changes were proposed in this pull request?

This took me a while to debug. It looks like we should at least leave a debug log stating which SQL text will be used for a view.

Here's how I got there:

**Hive:**

```sql
CREATE TABLE emp AS SELECT 'user' AS name, 'address' as address;
CREATE DATABASE d100;
CREATE FUNCTION d100.udf100 AS 'org.apache.hadoop.hive.ql.udf.generic.GenericUDFUpper';
CREATE VIEW testview AS SELECT d100.udf100(name) FROM default.emp;
```

**Spark:**

```scala
sql("SELECT * FROM testview").show()
```

```
scala> sql("SELECT * FROM testview").show()
org.apache.spark.sql.AnalysisException: Undefined function: 'd100.udf100'. This function is neither a registered temporary function nor a permanent function registered in the database 'default'.; line 1 pos 7
```

Under the hood, this actually makes sense: the view is defined as `SELECT d100.udf100(name) FROM default.emp;`, and Spark reads the view text through this Hive API:

```
org.apache.hadoop.hive.ql.metadata.Table.getViewExpandedText()
```

It returns a wrongly qualified SQL string for the view:

```
SELECT `d100.udf100`(`emp`.`name`) FROM `default`.`emp`
```

This string works fine in Hive but not in Spark.

## How was this patch tested?

Manually:

```
18/09/06 19:32:48 DEBUG HiveSessionCatalog: 'SELECT `d100.udf100`(`emp`.`name`) FROM `default`.`emp`' will be used for the view(testview).
```

Closes apache#22351 from HyukjinKwon/minor-debug.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Deprecate public APIs from `ImageSchema`.

## How was this patch tested?

N/A

Closes apache#22349 from WeichenXu123/image_api_deprecate.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

…UDFSuite

## What changes were proposed in this pull request?

In Spark 2.0.0, SPARK-14335 added some [commented-out test coverage](https://github.com/apache/spark/pull/12117/files#diff-dd4b39a56fac28b1ced6184453a47358R177). This PR enables those tests because the feature has been supported since 2.0.0.

## How was this patch tested?

Pass Jenkins with the re-enabled test coverage.

Closes apache#22363 from dongjoon-hyun/SPARK-25375.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

…expressions.

## What changes were proposed in this pull request?

Add a new optimization rule to eliminate unnecessary shuffling by flipping adjacent Window expressions.

## How was this patch tested?

Tested with unit tests, integration tests, and manual tests.

Closes apache#17899 from ptkool/adjacent_window_optimization.

Authored-by: ptkool <michael.styles@shopify.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Add the `spark.executor.pyspark.memory` limit for K8S.

## How was this patch tested?

Unit and integration tests.

Closes apache#22298 from ifilonenko/SPARK-25021.

Authored-by: Ilan Filonenko <if56@cornell.edu>

Signed-off-by: Holden Karau <holden@pigscanfly.ca>

## What changes were proposed in this pull request?

When running TPC-DS benchmarks on the 2.4 release, npoggi and winglungngai saw more than a 10% performance regression on the following queries: q67, q24a and q24b. After applying PR apache#22338, the performance regression still exists.

When the changes in apache#19222 were reverted, npoggi and winglungngai found that the performance regression was resolved. Thus, this PR reverts the related changes to unblock the 2.4 release.

In a future release, we can continue the investigation and find the root cause of the regression.

## How was this patch tested?

The existing test cases.

Closes apache#22361 from gatorsmile/revertMemoryBlock.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Remove the `BisectingKMeansModel.setDistanceMeasure` method. Setting this param on a `BisectingKMeansModel` is meaningless.

## How was this patch tested?

N/A

Closes apache#22360 from WeichenXu123/bkmeans_update.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

How to reproduce:

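```scala
import org.apache.spark.sql.functions.{lit, spark_partition_id}
import spark.implicits._ // enables the $"..." column syntax

val df1 = spark.createDataFrame(Seq(
  (1, 1)
)).toDF("a", "b").withColumn("c", lit(null).cast("int"))
val df2 = df1.union(df1).withColumn("d", spark_partition_id()).filter($"c".isNotNull)
df2.show
// Output: rows are returned even though c is null in both of them.
// +---+---+----+---+
// |  a|  b|   c|  d|
// +---+---+----+---+
// |  1|  1|null|  0|
// |  1|  1|null|  1|
// +---+---+----+---+
```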

`filter($"c".isNotNull)` was transformed to `(null <=> c#10)` before apache#19201, but it has been transformed to `(c#10 = null)` since apache#20155. This PR reverts it to `(null <=> c#10)` to fix the issue.
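The difference between the two operators is easiest to see with SQL's three-valued logic. The sketch below models it in plain Python, with `None` standing in for SQL `NULL`. It illustrates the operator semantics only, not the optimizer rule that this patch touches, and both function names are hypothetical.

```python
def sql_eq(a, b):
    """SQL `=`: the result is NULL (None) when either operand is NULL."""
    if a is None or b is None:
        return None
    return a == b


def sql_null_safe_eq(a, b):
    """SQL `<=>`: never NULL; two NULLs compare as equal."""
    if a is None or b is None:
        return a is None and b is None
    return a == b


print(sql_eq(None, None))            # NULL, so not a usable true/false answer
print(sql_null_safe_eq(None, None))  # definite answer
print(sql_null_safe_eq(None, 1))     # definite answer
```

Because `c = null` never evaluates to a definite true or false, any reasoning built on it can go wrong, while `null <=> c` always yields a definite answer.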

## How was this patch tested?

unit tests

Closes apache#22368 from wangyum/SPARK-25368.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

… for ORC native data source table persisted in metastore

## What changes were proposed in this pull request?

Apache Spark doesn't create a Hive table with duplicated fields in either case-sensitive or case-insensitive mode. However, Spark can first write ORC files in case-sensitive mode and then create a Hive table on that location. In this situation, field resolution should fail in case-insensitive mode. Otherwise, we don't know which columns will be returned or filtered. Previously, SPARK-25132 fixed the same issue in Parquet.

Here is a simple example:

```scala
val data = spark.range(5).selectExpr("id as a", "id * 2 as A")
spark.conf.set("spark.sql.caseSensitive", true)
data.write.format("orc").mode("overwrite").save("/user/hive/warehouse/orc_data")
sql("CREATE TABLE orc_data_source (A LONG) USING orc LOCATION '/user/hive/warehouse/orc_data'")
spark.conf.set("spark.sql.caseSensitive", false)
sql("select A from orc_data_source").show
// +---+
// |  A|
// +---+
// |  3|
// |  2|
// |  4|
// |  1|
// |  0|
// +---+
```

See apache#22148 for more details about parquet data source reader.
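A minimal Python sketch of the intended resolution behaviour is shown below. It is an illustration under simplifying assumptions (flat field names, exact or lower-cased comparison); `resolve_field` and its error message are hypothetical and are not taken from the ORC reader.

```python
def resolve_field(requested, file_fields, case_sensitive):
    """Pick the physical field for `requested`, failing on ambiguous matches."""
    if case_sensitive:
        matches = [name for name in file_fields if name == requested]
    else:
        matches = [name for name in file_fields if name.lower() == requested.lower()]
    if len(matches) > 1:
        # Ambiguous: refuse to guess which column the user meant.
        raise ValueError(
            "found duplicate field(s) %r: %s in case-insensitive mode"
            % (requested, matches)
        )
    return matches[0] if matches else None


print(resolve_field("A", ["a", "A"], case_sensitive=True))
try:
    resolve_field("A", ["a", "A"], case_sensitive=False)
except ValueError as error:
    print("failed:", error)
```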

## How was this patch tested?

Unit tests added.

Closes apache#22262 from seancxmao/SPARK-25175.

Authored-by: seancxmao <seancxmao@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

…ema in Parquet issue

## What changes were proposed in this pull request?

How to reproduce:

```scala
spark.sql("CREATE TABLE tbl(id long)")
spark.sql("INSERT OVERWRITE TABLE tbl VALUES 4")
spark.sql("CREATE VIEW view1 AS SELECT id FROM tbl")
spark.sql(s"INSERT OVERWRITE LOCAL DIRECTORY '/tmp/spark/parquet' " +
  "STORED AS PARQUET SELECT ID FROM view1")
spark.read.parquet("/tmp/spark/parquet").schema
// res10: org.apache.spark.sql.types.StructType = StructType(StructField(id,LongType,true))
```

The schema should be `StructType(StructField(ID,LongType,true))` as we `SELECT ID FROM view1`.

This PR fixes the issue.

## How was this patch tested?

unit tests

Closes apache#22359 from wangyum/SPARK-25313-FOLLOW-UP.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

…enabled

## What changes were proposed in this pull request?

With the flag `IGNORE_CORRUPT_FILES` enabled, schema inference should ignore corrupt Avro files, which is consistent with the Parquet and ORC data sources.

## How was this patch tested?

Unit test.

Closes apache#22611 from gengliangwang/ignoreCorruptAvro.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR aims to add `BloomFilterBenchmark`. Apache Spark has supported bloom filters for the ORC data source for a long time. For the Parquet data source, support is expected to be added with the next Parquet release update.

## How was this patch tested?

Manual.

```sh
SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.BloomFilterBenchmark"
```

Closes apache#22605 from dongjoon-hyun/SPARK-25589.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Refactor `UnsafeArrayDataBenchmark` to use a main method.

Generate the benchmark result:

```sh
SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.UnsafeArrayDataBenchmark"
```

## How was this patch tested?

Manual tests.

Closes apache#22491 from wangyum/SPARK-25483.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

In apache#20850, when writing non-null decimals, instead of zeroing all 16 allocated bytes, we zero out only the padding bytes. Since we always allocate 16 bytes, if the number of bytes needed for a decimal is lower than 9, the bytes between 8 and 16 are not zeroed.

I see 2 solutions here:

- we can zero out all the bytes in advance, as was done before apache#20850 (safer solution IMHO);
- we can allocate only the needed bytes (may be a bit more efficient in terms of memory used, but I have not investigated the feasibility of this option).

Hence I propose the first solution here in order to fix the correctness issue. We can eventually switch to the second later if we think it is more efficient.

## How was this patch tested?

Running the test attached in the JIRA + added UT.

Closes apache#22602 from mgaido91/SPARK-25582.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>
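The problem can be reproduced with a small byte-buffer model. The sketch below is a simplification and not the real `UnsafeRow` writer: it assumes a 16-byte slot that still holds stale `0xff` bytes from an earlier use, and compares zeroing only the padding up to the next 8-byte word with zeroing the whole slot first. `write_decimal` is a hypothetical helper.

```python
SLOT_SIZE = 16  # bytes always reserved for a decimal, as described above
WORD = 8        # padding is assumed to round up to the next 8-byte word


def write_decimal(slot, payload, zero_all):
    """Write `payload` at the start of a 16-byte slot (simplified model)."""
    if zero_all:
        # First solution: clear the whole slot before writing.
        slot[:] = bytes(SLOT_SIZE)
    else:
        # Padding-only zeroing: clear up to the next word boundary and no further.
        padded = -(-len(payload) // WORD) * WORD
        slot[len(payload):padded] = bytes(padded - len(payload))
    slot[:len(payload)] = payload


stale = bytearray(b"\xff" * SLOT_SIZE)
write_decimal(stale, b"\x01\x02", zero_all=False)
print(stale.hex())  # bytes 8..16 still contain old data

clean = bytearray(b"\xff" * SLOT_SIZE)
write_decimal(clean, b"\x01\x02", zero_all=True)
print(clean.hex())  # everything after the payload is zero
```

Two rows holding the same decimal can then differ byte for byte in the first variant, which is why the safer option is to zero the slot up front.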

…ClosureCleaner

## What changes were proposed in this pull request?

Cause: Recently `test_glr_summary` failed for the PR of SPARK-25118, which enables spark-shell to run with the default log level. It failed because this `logDebug` was called for `GeneralizedLinearRegressionTrainingSummary`, which invoked its `toString` method, which started a Spark job and ended up running into an infinite loop.

Fix: Remove the `logDebug` statement for outer objects, as closures aren't implemented with outer classes in Scala 2.12 and this debug statement loses its purpose.

## How was this patch tested?

Ran Python pyspark-ml tests on top of the PR for SPARK-25118, and ClosureCleaner unit tests.

Closes apache#22616 from ankuriitg/ankur/SPARK-25586.

Authored-by: ankurgupta <ankur.gupta@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

…for SQL Statement

## What changes were proposed in this pull request?

This PR proposes to allow registering grouped aggregate vectorized UDFs (pandas UDFs) for use in SQL statements, for instance:

```python
from pyspark.sql.functions import pandas_udf, PandasUDFType

# The decorator turns the function into a grouped aggregate pandas UDF.
@pandas_udf("integer", PandasUDFType.GROUPED_AGG)
def sum_udf(v):
    return v.sum()

spark.udf.register("sum_udf", sum_udf)
q = "SELECT v2, sum_udf(v1) FROM VALUES (3, 0), (2, 0), (1, 1) tbl(v1, v2) GROUP BY v2"
spark.sql(q).show()
```

```
+---+-----------+
| v2|sum_udf(v1)|
+---+-----------+
|  1|          1|
|  0|          5|
+---+-----------+
```

## How was this patch tested?

Manual test and unit test.

Closes apache#22620 from HyukjinKwon/SPARK-25601.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

So after apache#22266, we need

@sjrand Dockerfiles for build containers are in this repo. Ping me so I can get you the creds to publish them to dockerhub. I think they changed the version on master so you need to make sure it's updated everywhere.

…nput when not necessary

## What changes were proposed in this pull request?

In `SparkPlan.getByteArrayRdd`, we should only call `it.hasNext` when the limit is not hit, as `iter.hasNext` may produce one row and buffer it, and cause wrong metrics.

## How was this patch tested?

New tests.

Closes apache#22621 from cloud-fan/range.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>
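The effect of the evaluation order can be shown with a small model. The sketch below is not Spark code: `CountingSource` is a hypothetical iterator whose `has_next()` eagerly produces and buffers a row, which is the behaviour described above, and `rows_produced` stands in for the metric.

```python
class CountingSource:
    """Iterator-like source whose has_next() produces and buffers one row."""

    def __init__(self, rows):
        self._rows = iter(rows)
        self._buffered = None
        self._has_buffered = False
        self.rows_produced = 0  # stands in for the input metric

    def has_next(self):
        if not self._has_buffered:
            try:
                self._buffered = next(self._rows)
            except StopIteration:
                return False
            self._has_buffered = True
            self.rows_produced += 1
        return True

    def next(self):
        if not self.has_next():
            raise StopIteration
        self._has_buffered = False
        return self._buffered


def take_has_next_first(source, limit):
    out = []
    while source.has_next() and len(out) < limit:  # may pull one row too many
        out.append(source.next())
    return out


def take_limit_first(source, limit):
    out = []
    while len(out) < limit and source.has_next():  # stops before touching the source
        out.append(source.next())
    return out


eager, lazy = CountingSource(range(10)), CountingSource(range(10))
print(take_has_next_first(eager, 3), eager.rows_produced)
print(take_limit_first(lazy, 3), lazy.rows_produced)
```

Both loops return the same rows, but only the second leaves the source's counter equal to the number of rows actually consumed.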

## What changes were proposed in this pull request?

Refactor `DatasetBenchmark` to use a main method.

Generate the benchmark result:

```sh
SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.DatasetBenchmark"
```

## How was this patch tested?

Manual tests.

Closes apache#22488 from wangyum/SPARK-25479.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

…g against Object store.

## What changes were proposed in this pull request?

Original work by Steve Loughran. Based on apache#17745.

This is a minimal patch of changes to `FileInputDStream` to reduce file status requests when querying files. Each call to file status is 3+ HTTP calls to the object store. This patch eliminates the need for them by using `FileStatus` objects.

This is a minor optimisation when working with filesystems, but significant when working with object stores.

## How was this patch tested?

Tests included. Existing tests pass.

Closes apache#22339 from ScrapCodes/PR_17745.

Lead-authored-by: Prashant Sharma <prashant@apache.org>

Co-authored-by: Steve Loughran <stevel@hortonworks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

The PR changes the test introduced for SPARK-22226 so that we don't run analysis and optimization on the plan. The scope of the test is code generation, and running those two phases is expensive and useless for the test.

The UT was also moved to `CodeGenerationSuite`, which is a better place given the scope of the test.

## How was this patch tested?

Running the UT before SPARK-22226 fails; after it, the UT passes. The execution time is about 50% of the original. On my laptop this means that the test now runs in about 23 seconds (instead of 50 seconds).

Closes apache#22629 from mgaido91/SPARK-25609.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

…nkins

## What changes were proposed in this pull request?

Reduce the time cost of the `DateExpressionsSuite.Hour` test in Jenkins by reducing the number of iterations.

## How was this patch tested?

Manual tests on my local machine.

before:

```
- Hour (34 seconds, 54 milliseconds)
```

after:

```
- Hour (2 seconds, 697 milliseconds)
```

Closes apache#22632 from wangyum/SPARK-25606.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

…of timezones

## What changes were proposed in this pull request?

The test `cast string to timestamp` used to run for all time zones, so it ran more than 600 times. Running the test for a significant subset of time zones is probably good enough, and choosing the subset randomly ensures that different runs will still cover all time zones over time.

## How was this patch tested?

The test time drops to 11 seconds from more than 2 minutes.

Closes apache#22631 from mgaido91/SPARK-25605.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>
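The randomized subset described in the SPARK-25605 message above could look roughly like the following. This is a minimal sketch using only the JDK, not the code from the patch: the class `TimeZoneSample`, the method `sample`, and the explicit seed parameter are assumptions made for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.Random;
import java.util.TimeZone;

// Hypothetical helper, not part of Spark.
class TimeZoneSample {
    // Returns up to `n` time zone IDs chosen at random from all IDs the JVM knows.
    // Passing the seed explicitly makes a failing run reproducible.
    static List<String> sample(int n, long seed) {
        List<String> ids = new ArrayList<>(Arrays.asList(TimeZone.getAvailableIDs()));
        Collections.shuffle(ids, new Random(seed));
        return new ArrayList<>(ids.subList(0, Math.min(n, ids.size())));
    }

    public static void main(String[] args) {
        long seed = System.nanoTime();
        // Log the seed so a failure can be replayed with the same subset.
        System.out.println("time zone sample seed = " + seed);
        for (String id : sample(50, seed)) {
            // The per-time-zone assertions of the test would run here.
            System.out.println(id);
        }
    }
}
```

Because each run draws a different subset, repeated CI runs cover more of the time zones over time while each individual run stays short. Logging the seed is a design choice of this sketch, added so that a failure tied to one time zone can be reproduced.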

While working on another PR, I noticed that there is quite some legacy Java in there that can be beautified. For example, the use of features from Java 8, such as:

- Collection libraries
- Try-with-resource blocks

No code has been changed.

What are your thoughts on this?

This makes the code easier to read, and using try-with-resource makes it less likely that we forget to close something.

## What changes were proposed in this pull request?

(Please fill in changes proposed in this fix)

## How was this patch tested?

(Please explain how this patch was tested. E.g. unit tests, integration tests, manual tests)

(If this patch involves UI changes, please attach a screenshot; otherwise, remove this)

Please review http://spark.apache.org/contributing.html before opening a pull request.

Closes apache#22399 from Fokko/SPARK-25408.

Authored-by: Fokko Driesprong <fokkodriesprong@godatadriven.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>
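As an illustration of the try-with-resource point in the SPARK-25408 message above, here is a small side-by-side sketch. It is not taken from the Spark code base: the class `FirstLineReader` and both method names are hypothetical.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;

// Hypothetical example class, not part of Spark.
class FirstLineReader {
    // Legacy style: the reader has to be closed by hand in a finally block.
    static String firstLineLegacy(String text) {
        BufferedReader reader = new BufferedReader(new StringReader(text));
        try {
            return reader.readLine();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } finally {
            try {
                reader.close();
            } catch (IOException ignored) {
                // Nothing useful to do if close fails in this sketch.
            }
        }
    }

    // Try-with-resources style: the reader is closed automatically when the
    // block exits, whether normally or through an exception.
    static String firstLine(String text) {
        try (BufferedReader reader = new BufferedReader(new StringReader(text))) {
            return reader.readLine();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

Both methods return the same result. The second is shorter and cannot leak the reader if someone later adds an early return or another statement that may throw.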

This reverts commit 44c1e1a.

Finally have everything green except for the scala tests. I suspect that the failures in |

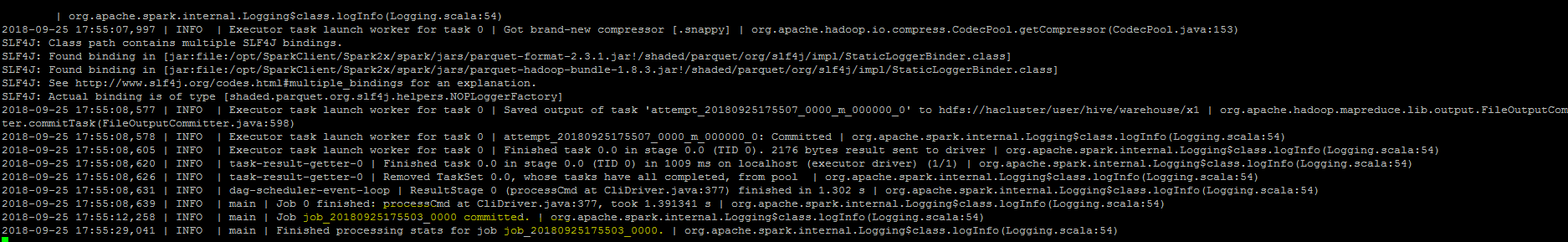

…ommand Job is finished.

## What changes were proposed in this pull request?

As part of the insert command in `FileFormatWriter`, a job context is created to handle the write operation. While initializing the job context through the `setupJob()` API in `HadoopMapReduceCommitProtocol`, we set the job ID in the `JobContext` configuration. Because `FileFormatWriter` reads the job ID directly from the MapReduce `JobContext`, the job ID is null when it is written to the log. As a solution, we get the job ID from the configuration of the MapReduce `JobContext`.

## How was this patch tested?

Manually; verified the logs after the changes.

Closes apache#22572 from sujith71955/master_log_issue.

Authored-by: s71955 <sujithchacko.2010@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

Hi all,

Jackson is incompatible with upstream versions, therefore bump the Jackson version to a more recent one. I bumped into some issues with Azure CosmosDB, which uses a more recent version of Jackson. This can be fixed by adding exclusions, and then it works without any issues, so there are no breaking changes in the APIs.

I would also consider bumping the version of Jackson in Spark. I would suggest keeping the dependencies up to date, since this issue will pop up more frequently in the future.

## What changes were proposed in this pull request?

Bump Jackson to 2.9.6.

## How was this patch tested?

Compiled and tested it locally to see if anything broke.

Please review http://spark.apache.org/contributing.html before opening a pull request.

Closes apache#21596 from Fokko/fd-bump-jackson.

Authored-by: Fokko Driesprong <fokkodriesprong@godatadriven.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

Conflicts resolved in last commit.